What Does It Mean for AI to Be a Cognitive Tool?

You open ChatGPT when the blank page won’t move, when an email feels risky, or when a plan has too many loose parts. The useful moment isn’t “write this for me.” It’s when you need a second surface to think on, and you need it fast.

As a cognitive tool, AI helps you offload mental steps: outlining, listing options, turning vague goals into constraints, and spotting missing questions. It’s closer to a structured scratchpad than an answer key. You still decide what “good” means, what you can’t assume, and what you’ll do next.

If you don’t give it context and push back on its answers, it will fill the gaps with confident guesses. That’s why the real skill isn’t generating text. It’s running a repeatable loop that reduces uncertainty: give context, get structure, push back, and verify what matters.

How AI Extends Human Memory and Information Processing

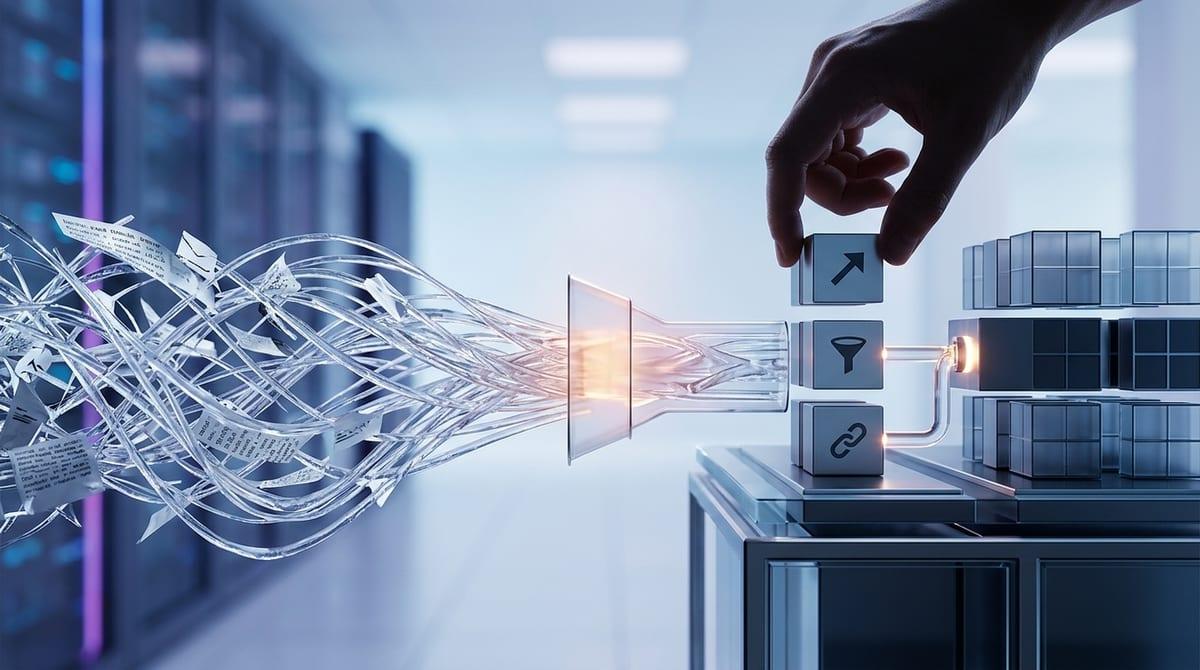

That loop gets easier when you treat AI like working memory you can pull up on demand. In a typical week, you’re juggling notes from meetings, half-formed ideas, and scattered docs. AI can hold the threads in one place: summarize a long brief into “what matters,” extract decisions and open questions from a call transcript, or build a quick table of options and criteria from your messy bullet points.

It also speeds up basic processing you’d otherwise do by hand. If you paste in three competing proposals, you can ask for a side-by-side comparison, then follow with “what would change your recommendation?” to surface assumptions. The limitation shows up quickly in practice: if the inputs are thin or outdated, the output will sound organized but won’t be grounded. You still need to supply sources, dates, and constraints, then use the AI’s structure to decide what to verify.

Enhancing Problem-Solving Through AI Assistance

That “decide what to verify” move is where AI starts to feel like a problem-solving partner instead of a writing shortcut. In a familiar situation—say you need to pick a campaign direction by Friday—you can feed the messy inputs and ask for a decision frame: goal, constraints, success metric, and the few variables that actually change the outcome. Then push for options: “Give me 6 approaches, each with a one-sentence rationale, the key assumption, and the easiest test.”

When you’re stuck, use AI to generate pressure, not polish. Ask for a pre-mortem (“If this fails in 60 days, what likely caused it?”), an argument map (“strongest case for/against”), and a short list of disconfirming questions to take to your team or your data.

If you don’t provide constraints like budget, audience, and timing, you’ll get a wide menu that still leaves you guessing. Tight prompts turn that menu into a few workable bets—and set up a cleaner handoff between your judgment and the tool.

AI and Augmented Thinking: Collaboration Between Humans and Machines

That handoff works best when you split roles on purpose: you provide intent and constraints, and the AI provides structure and variation. In practice, this looks like drafting a one-paragraph “decision brief” (what you’re deciding, by when, what can’t change), then using the model to expand the space: options, risks, metrics, and what you’d need to learn to choose.

For example, if you’re rewriting a positioning page, ask for three versions aimed at different buyer mindsets, then follow with: “What’s the implied promise in each, and what proof would a skeptical customer demand?” You’re not delegating taste. You’re forcing clarity, then checking whether the words match the reality of your product and evidence.

If you don’t feed it real inputs (current numbers, actual customer objections, the legal constraints you operate under), it will invent plausible glue to fill the gaps. That’s where the limits show up fast.

The Limits of AI as a Cognitive Tool

Those invented “plausible glue” moments usually show up when you ask for something that depends on facts: current pricing, what a competitor just launched, what your customers actually said, or what your company policy allows. If the model doesn’t have the exact inputs, it will still complete the pattern. The writing can look clean while the foundation is missing. That’s not a moral failing; it’s a predictable failure mode when you treat it like a live database instead of a reasoning aid.

It also struggles with your hidden constraints. Ask for “a plan to increase retention,” and it may propose moves your team can’t execute (no engineering time, no budget, compliance review required). You have to pin it down with real limits and real timelines, or you’ll waste time evaluating ideas that were never feasible.

Finally, AI can’t own the call. It can map options and stress-test assumptions, but it can’t decide what you value, what risk you’ll carry, or what evidence is “enough.” If you want it to reduce uncertainty, you have to force a verification step—and accept that some outputs should trigger a stop, not a sprint.

Risks of Over-Reliance on AI in Thinking Processes

That “stop, not a sprint” moment gets rarer when AI becomes your default move. You ask for an answer before you’ve named the decision, the stake, or the constraint that actually binds. The output feels productive, but it can quietly swap your problem for an easier one—like optimizing wording when you really needed to choose a strategy.

Over-reliance also dulls your internal checks. If you don’t pause to ask “what would I accept as proof?” you’ll start treating confident phrasing as evidence. In a forecast, that can mean copying a neat set of assumptions into a deck without noticing one is impossible given your pipeline history. In policy work, it can mean missing a compliance constraint because the model never saw it.

You can burn an hour iterating prompts to get a “smart” answer instead of spending ten minutes pulling the one data point that would settle the question. The safer habit is to use AI to surface what to verify, then go verify it.

How to Use AI Effectively to Strengthen (Not Replace) Your Thinking

Using AI to surface what to verify only works if you run it like a quick checklist, not an open-ended conversation. Start with a short brief: “Decision: ____ by (date). Constraints: ____. Success looks like ____.” Then ask for structure: “Give me 3 options, the key assumption behind each, and the fastest test I can run this week.”

Before you act, pressure-test: “What would make each option fail? What am I ignoring? What evidence would change the recommendation?” Treat anything factual as a to-do list: “List the claims that require sources, and suggest where I can confirm each one.”

The hard part is discipline. If you can’t name the constraint or pull the data, stop prompting and go get it.