What Is Model Interpretability and Why It Matters

You’re about to ship an AI feature, and someone asks a simple question: “Why did it do that?” If the only honest answer is “because the model says so,” you may still launch—but you’ll struggle in reviews, incident response, and customer conversations.

Model interpretability means you can explain, in plain terms, which inputs drove an output and how strong those drivers were. That can be a global story (“what generally matters”) or a case-by-case story (“why this user got this result”). It matters for debugging, spotting bias, meeting policy needs, and defending decisions when users appeal or regulators ask.

More interpretable approaches can cap accuracy or require extra work to keep explanations stable and reliable. That tension sets up the real decision.

What Defines AI Model Performance in Real-World Applications

That decision usually starts with a familiar moment: you hit a strong validation score, then the pilot goes live and complaints show up. In real products, “performance” isn’t one number. It’s whether the model helps users complete a task with fewer errors, less time, and fewer bad edge cases that blow up support.

Measure what the product actually pays for. If a false positive creates a manual review, track precision at the operating threshold and cost per case. If missing a fraud event is worse than blocking a good user, weight recall accordingly and test across segments, not just overall averages. Also watch stability: does the model hold up when traffic, seasonality, or input formats shift?

Getting real-world labels can take weeks, and monitoring adds ongoing work. But without those signals, “better” performance is easy to claim and hard to defend.

Why Interpretability and Performance Often Conflict

That “hard to defend” feeling shows up fast when the model that wins on metrics is also the one you can’t explain without hand-waving. In practice, the best-performing models often lean on thousands of small signals and their interactions. That’s great for squeezing out accuracy, but it makes it difficult to say which input “caused” the output in a way a reviewer, auditor, or customer will accept.

Interpretability usually asks for constraints: fewer features, simpler structures, monotonic rules (“more income shouldn’t lower approval”), or clear reasoning paths. Those constraints can block useful patterns, like non-linear effects or combinations (“late payments matter more when utilization is high”). You can add post-hoc explanations, but they can shift when you retrain, change thresholds, or see new data, which creates its own risk during incidents.

Every new feature or model update becomes an explanation update, a doc update, and often a policy review. That’s why it helps to compare the typical model options side by side.

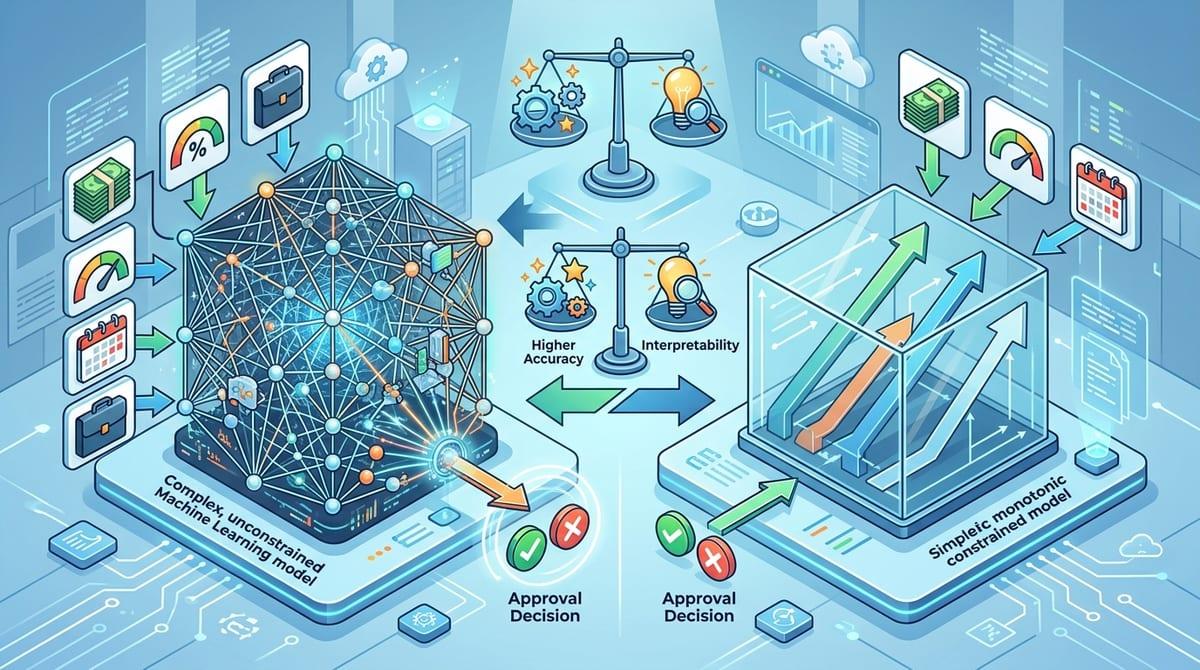

Comparing Interpretable vs High-Performance Models

Side by side, the choice often looks like this: a simpler, interpretable model (linear/logistic regression, decision tree, scorecard, monotonic gradient boosting) versus a higher-performing model that’s harder to justify (deep nets, complex ensembles, large feature sets with heavy interactions). In a loan pre-check, the interpretable option can tell you “utilization and missed payments drove this,” and you can test rules like “more income shouldn’t reduce approval.” That makes reviews and appeals faster.

The black-box option may cut defaults or fraud a bit more, especially when weak signals add up. But when a VIP customer gets blocked, you may only have a ranked list of correlated features, not a clear reason. That slows incident response and creates inconsistent explanations across retrains.

Interpretable models usually need stricter feature hygiene (clean, stable inputs) to stay trustworthy, while black boxes demand heavier monitoring and rollback plans. The next step is learning which techniques let you borrow some clarity without giving up all the lift.

Techniques to Improve Interpretability Without Losing Too Much Performance

Borrowing some clarity usually starts when you keep the stronger model but tighten how it’s allowed to behave. If you’re using gradient boosting, add monotonic constraints where the relationship should only go one way (for example, more verified income shouldn’t lower approval). If feature sprawl is the problem, cap interactions, group related inputs into stable aggregates (like “recent payment issues”), and drop fields you can’t explain or reliably collect.

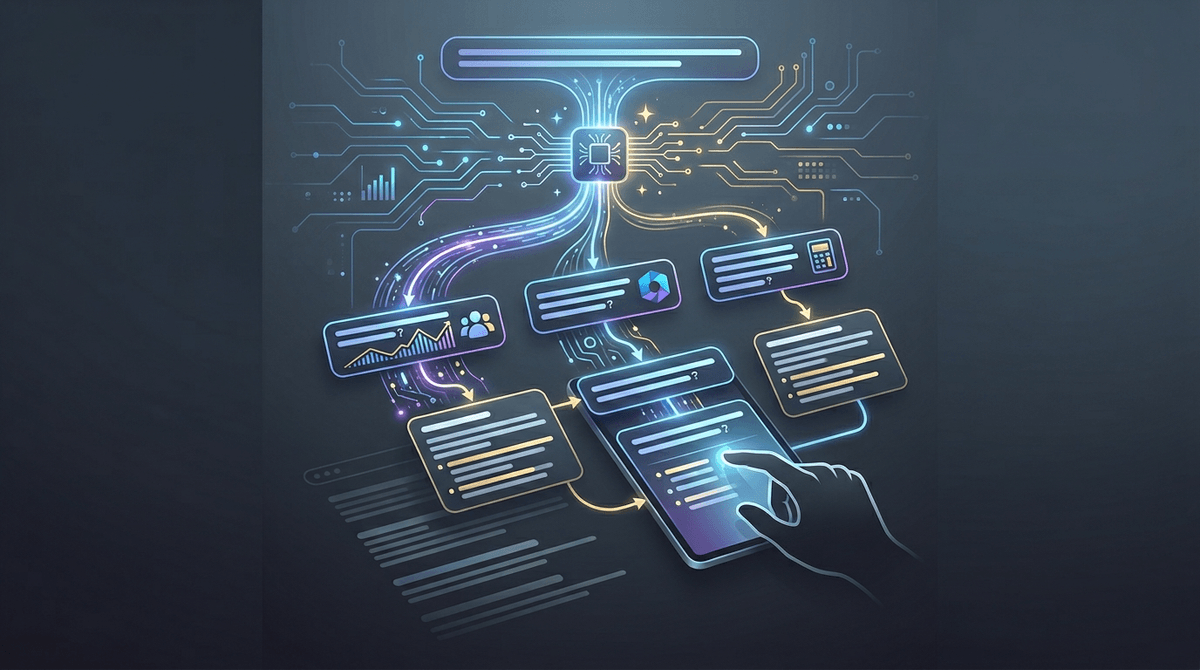

When you need a clearer story, use a two-model setup: train the high-performance model, then distill it into a simpler surrogate (like a compact tree or scorecard) for review and support. You can also use local explanations (SHAP, counterfactual examples) to answer “what changed this decision?” but treat them as diagnostic evidence, not a legal-grade reason code. They can shift after retraining or small data changes.

Choosing the Right Balance for Different Use Cases

Sign-off usually forces the choice. If your feature can deny access, change pricing, trigger enforcement, or create a user appeal queue, default to a model you can explain with stable reason codes and testable constraints. In practice that means: document allowed inputs, lock monotonic rules where they must hold, run slice tests for protected or high-risk segments, and keep a human-review path for borderline cases.

If the output is assistive (ranking, drafting, routing) and the cost of a wrong answer is limited, a higher-performing black box can be acceptable—if you can prove control. Collect evidence like offline cost curves at the chosen threshold, shadow launches, drift and data-quality alerts, and a rollback plan tied to concrete metrics (complaints, manual reviews, loss rates).

Future Trends: Toward Explainable and High-Performance AI

As explainability tooling and standards mature, the bar will rise from “we can generate an explanation” to “we can prove it stays consistent under retrains, threshold changes, and new traffic.” Expect more baked-in constraints (monotonicity, reason-code templates, input allowlists) and more “evidence packets” (model cards, data lineage, slice tests) that ship alongside the model, not after an incident.

You’ll also see more hybrid designs: a strong model for scoring, plus a simpler policy layer for decisions and user-facing reasons, with audits on both. Retrieval-based features can cut reliance on brittle feature interactions, but they add failure modes like stale sources and missing citations.

Operating principle: choose the model you can continuously defend, not the one that wins a single benchmark.