The first time an LLM “gets it” — and why that reaction matters

You paste a messy meeting transcript into a chat box and ask for a customer update. It returns a clean, on-tone note with the right bullets, the right caveats, even a line that sounds like you. The first time that happens, most people feel a quick shift from “tool” to “teammate.”

That reaction matters because fluency feels like understanding. When something mirrors your intent, you stop checking as hard. In a real workflow, that can turn into a quiet habit: copying a summary into a doc, forwarding a support reply, or making a roadmap call off a confident-sounding explanation.

The problem is that “sounds right” and “is right” diverge more often than you want, even when the writing is excellent.

When it answers beautifully and still might be wrong

That divergence usually shows up when you ask for something that looks routine: “Summarize the customer’s main objections,” “Explain why churn spiked,” “List the plan limits.” The answer reads like a polished internal memo. It also might quietly slip in details that were never in your transcript, your dashboard, or your docs.

This happens most in the gaps: missing context, fuzzy wording, or questions that require a specific source of truth. If your notes mention “pricing confusion,” the model can confidently “clarify” which tier caused it. If you paste a ticket thread, it can invent a timeline that fits the tone. The writing stays smooth because it’s optimized to be plausible, not to be accountable.

If you treat every fluent answer like a verified brief, you’ll ship errors faster. The fix isn’t distrust; it’s knowing what kinds of prompts expose the cracks.

So is it reasoning, or just remixing what it has seen?

Those cracks tend to appear right where you expected “thinking” to carry the load. You ask a model to explain a churn spike, and it produces a tidy chain of causes and effects. Sometimes that chain is useful. Sometimes it’s a story that fits the shape of the question.

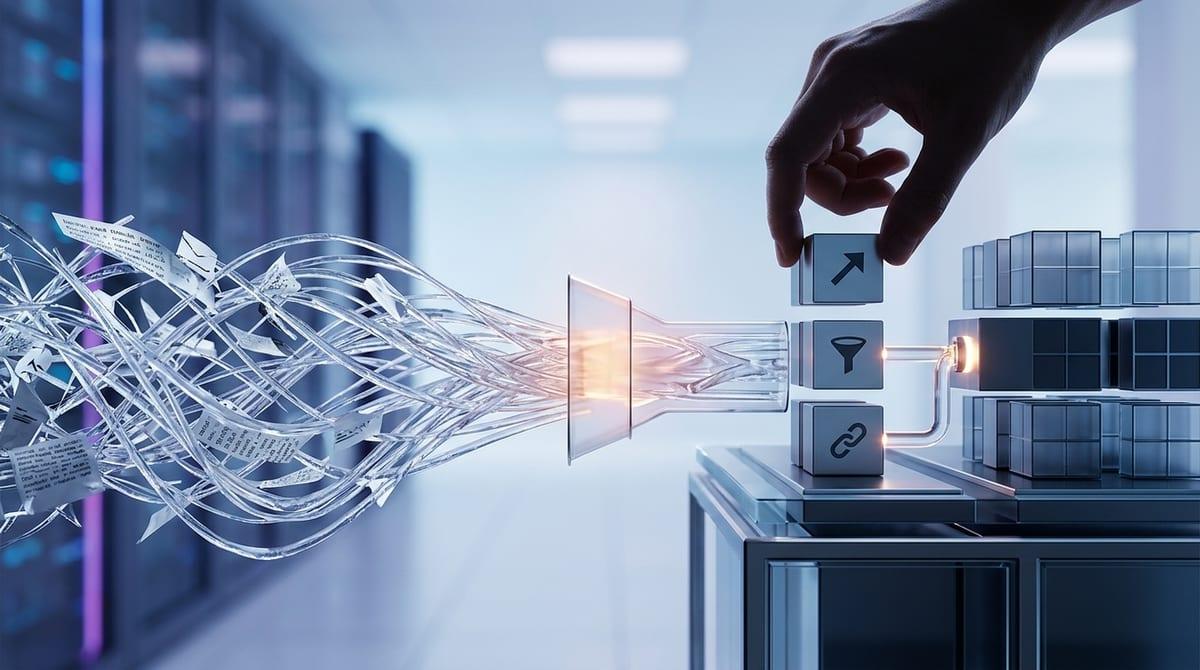

LLMs don’t pull from a single, grounded record of what happened. They predict the next most likely words given your prompt and what they’ve seen during training, which can mimic reasoning when the pattern matches your situation. Ask for a comparison table, and it often stays consistent because the format constrains it. Ask, “What’s the real reason?” and it may fill in missing pieces with whatever explanation is most common in similar writing.

The practical consequence is simple: “good logic” isn’t proof of “true facts.” You need a quick way to tell when it’s following your inputs versus inventing the bridge.

A simple stress test you can run in everyday work

In a real sprint, you rarely ask for “thought.” You ask for something like “What did the customer mean here?” or “Which plan does this refer to?” That’s exactly where the model can start quietly building bridges it wasn’t given.

A simple stress test is to force it to separate what it saw from what it inferred. Paste the same input and ask for three blocks: (1) “Direct quotes or exact phrases that support each claim,” (2) “Inferences I made to connect those quotes,” and (3) “Open questions or missing data I would need to be sure.” If it can’t produce solid text evidence, you’ve learned something fast: the answer is mostly a plausible fill-in, not a grounded read.

Then tighten the screws. Ask it to give two competing explanations that both fit the same evidence, and to say what would disconfirm each one. The friction is time: this takes longer than a single prompt, but it’s still far faster than discovering a confident mistake after you’ve shipped it.
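If you run this test often, it helps to keep the wording in a reusable template. Here is a minimal sketch in Python. The function name `build_stress_test_prompt` and the block labels are illustrative assumptions, not part of any particular tool, and the resulting string can be pasted into whatever chat box or API you already use.

```python
def build_stress_test_prompt(source_text: str, question: str) -> str:
    """Build a prompt that asks the model to separate evidence from inference.

    The block labels below are hypothetical; rename them to suit your team.
    """
    instructions = [
        "Answer the question using only the source text below.",
        "Organize your response into these labeled blocks:",
        "1. EVIDENCE: direct quotes or exact phrases that support each claim.",
        "2. INFERENCES: inferences you made to connect those quotes.",
        "3. OPEN QUESTIONS: missing data you would need to be sure.",
        "4. ALTERNATIVES: two competing explanations that fit the same evidence, "
        "and what would disconfirm each one.",
    ]
    return (
        "\n".join(instructions)
        + f"\n\nQUESTION:\n{question}\n\nSOURCE TEXT:\n{source_text}\n"
    )


# Example usage with a made-up transcript line.
transcript = "Customer: The pricing page confused us. We could not tell which tier includes SSO."
prompt = build_stress_test_prompt(transcript, "What were the customer's main objections?")
print(prompt)
```

The template only standardizes the ask. You still have to read the EVIDENCE block against your own input, because the model can misquote as smoothly as it summarizes.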

Where you can treat the model like a very fast drafting partner

Once you’ve separated evidence from inference, a pattern shows up: the model is strongest when you want a shape, not a verdict. Give it bounded inputs and a clear format, and it can draft faster than you can type—customer update emails, release notes from a changelog, a PRD first pass from a pile of bullets, or three variations of a support reply that match your tone.

The trick is to make “drafting” the job. Ask for structure, options, and language, then you supply the truth. For example: “Turn these five decisions into a one-page narrative for execs,” or “Write two versions: one direct, one more diplomatic.” If the source is your text, you’re mostly getting compression and phrasing, not invented facts.

The trade-off is hidden dependency. The cleaner the draft looks, the easier it is to forget what wasn’t in the input—so keep the handoff explicit: paste the draft into your doc with a quick note of what still needs a real check.

Where ‘sounding sure’ becomes a business risk

That “quick note of what still needs a real check” is easy to skip when the output reads like a finished decision. In practice, the riskiest moment isn’t when the model is obviously confused. It’s when it’s calm, specific, and certain—especially around numbers, policy, or timelines.

Put an LLM inside customer support, and it can answer with the right tone while citing a plan limit that’s outdated, or promising a refund path that doesn’t exist. Put it in product work, and it can turn a messy dashboard into a confident explanation for churn that later shows up in a slide deck as if it were a measurement. The consequence is rework at best. At worst, it’s a customer commitment, a compliance issue, or a decision trail you can’t defend later.

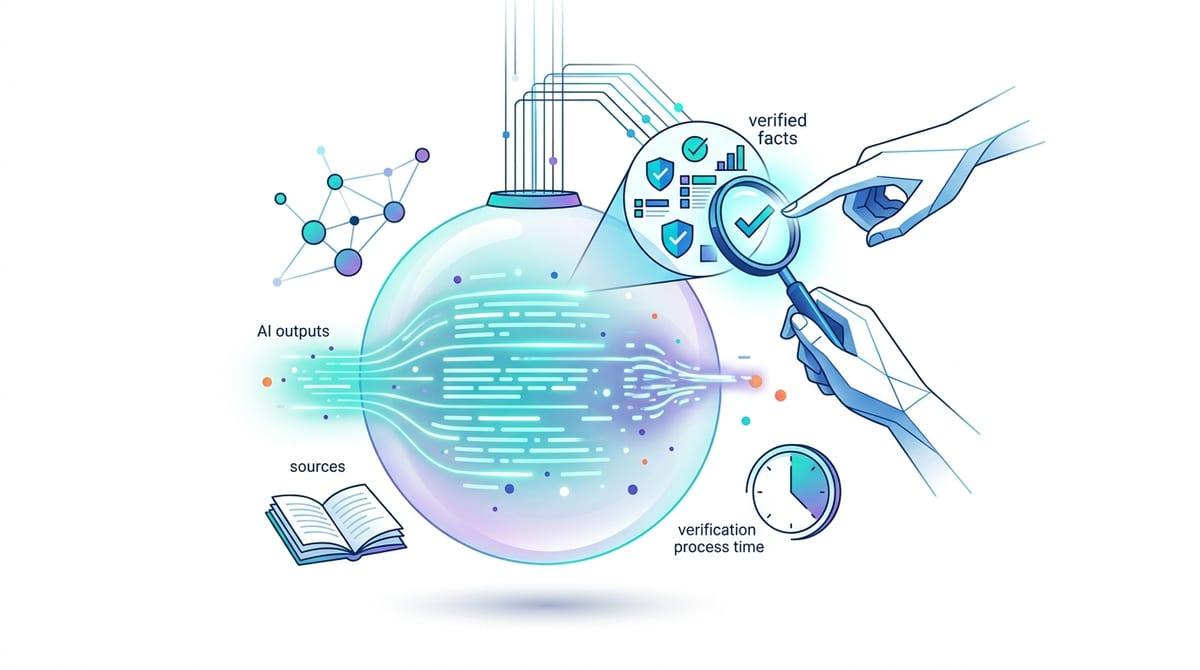

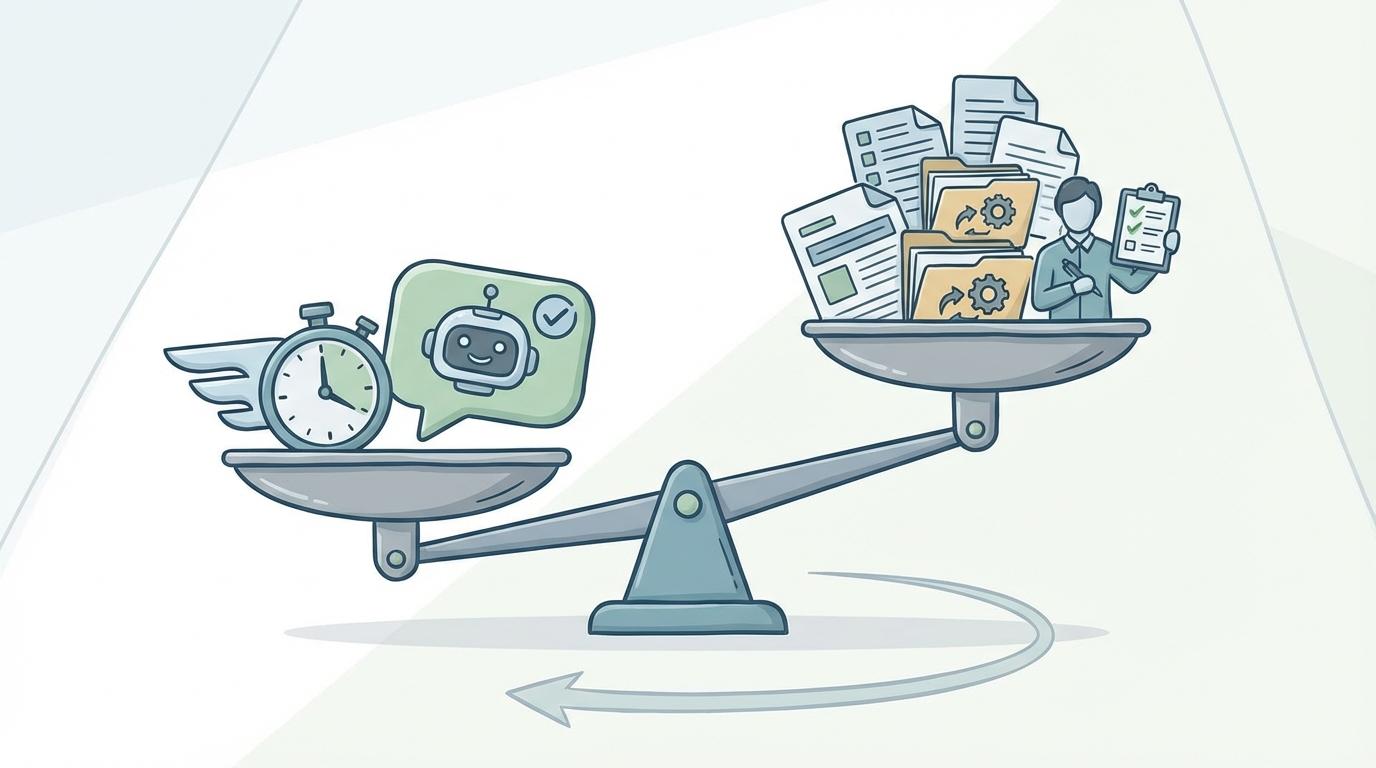

The practical trade-off is speed versus accountability. The more “authoritative” you let the model sound, the more you need hard constraints: require source links or quoted evidence for any factual claim, reject any citation that can’t be traced back to a real source, and route high-stakes outputs through a human owner with a checklist. That choice becomes concrete when you decide what, exactly, is allowed to ship without verification.
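The “quoted evidence” constraint is one you can check mechanically. The sketch below, in Python, assumes you have already asked the model to return each claim with a supporting quote. The `claim` and `quote` field names and the function names are hypothetical. It flags any claim whose quote does not appear in the source text.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences do not cause misses."""
    return re.sub(r"\s+", " ", text).strip().lower()


def unsupported_claims(claims: list[dict], source_text: str) -> list[str]:
    """Return the claims whose quoted evidence is missing from the source text."""
    haystack = normalize(source_text)
    missing = []
    for item in claims:
        quote = normalize(item.get("quote", ""))
        # An empty quote counts as unsupported: the claim showed no input.
        if not quote or quote not in haystack:
            missing.append(item["claim"])
    return missing


# Example usage with made-up plan details.
source = "The Starter plan allows 5 seats. Refunds are available within 14 days."
claims = [
    {"claim": "Starter allows 5 seats", "quote": "The Starter plan allows 5 seats"},
    {"claim": "Refunds within 30 days", "quote": "Refunds are available within 30 days"},
]
print(unsupported_claims(claims, source))  # ['Refunds within 30 days']
```

A substring match is deliberately strict. It catches invented quotes, but it won’t tell you whether a real quote actually supports the claim attached to it, so the human owner still has a job.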

Picking an operational stance: useful simulation with deliberate guardrails

“Allowed to ship without verification” is the line you should draw on purpose, not by habit. A practical stance is to treat the model as a useful simulation: great at generating drafts and options, unreliable as a source of record. That mindset keeps you from confusing coherence with truth.

Then set guardrails that match the job. If the output could change money, policy, or customer commitments, require quoted evidence, linked sources, or a retrieval step from your own docs—and block sending anything that can’t show its inputs. If it’s pure drafting, let it run fast.
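One way to make that rule explicit is a small routing function that runs before anything is sent. This is a sketch under stated assumptions: the topic labels, the `Draft` fields, and the three outcomes are all hypothetical, and how a draft gets tagged with topics is left to your own workflow.

```python
from dataclasses import dataclass, field

# Assumed labels for outputs that could change money, policy, or commitments.
HIGH_STAKES_TOPICS = {"money", "policy", "customer_commitment"}


@dataclass
class Draft:
    text: str
    topics: set[str] = field(default_factory=set)
    evidence: list[str] = field(default_factory=list)  # quotes or source links


def route(draft: Draft) -> str:
    """Return 'send', 'human_review', or 'block' for a model-generated draft."""
    high_stakes = bool(draft.topics & HIGH_STAKES_TOPICS)
    if not high_stakes:
        return "send"  # pure drafting: let it run fast
    if not draft.evidence:
        return "block"  # high stakes and it cannot show its inputs
    return "human_review"  # evidence attached; a human owner still signs off


# Example usage with made-up drafts.
print(route(Draft("Three subject-line options for the newsletter")))
print(route(Draft("You are eligible for a full refund.", topics={"money"})))
```

The point of writing it down is less the code than the decision: once the rule lives in one place, “allowed to ship without verification” stops being a habit and becomes something you can review.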

The friction is overhead: more checks, more steps, slightly slower cycles. The payoff is decisions you can defend.