When “the same question” keeps getting different answers

You ship an assistant that sounds steady in demos. A week later, two customers paste the “same” question into a ticket and get answers that don’t match. One response is cautious and policy-heavy; the other is confident and specific. Support flags it as inconsistency, Legal worries about commitments, and your team spends hours trying to reproduce what happened.

This usually starts because requests are never actually identical. A user changes one detail, omits a key constraint, or phrases the request in a different tone (“can you” vs. “you must”). The model fills gaps with whatever context it has, and small differences snowball into different outputs. The hard part is deciding what “reliable” should mean before you try to enforce it.

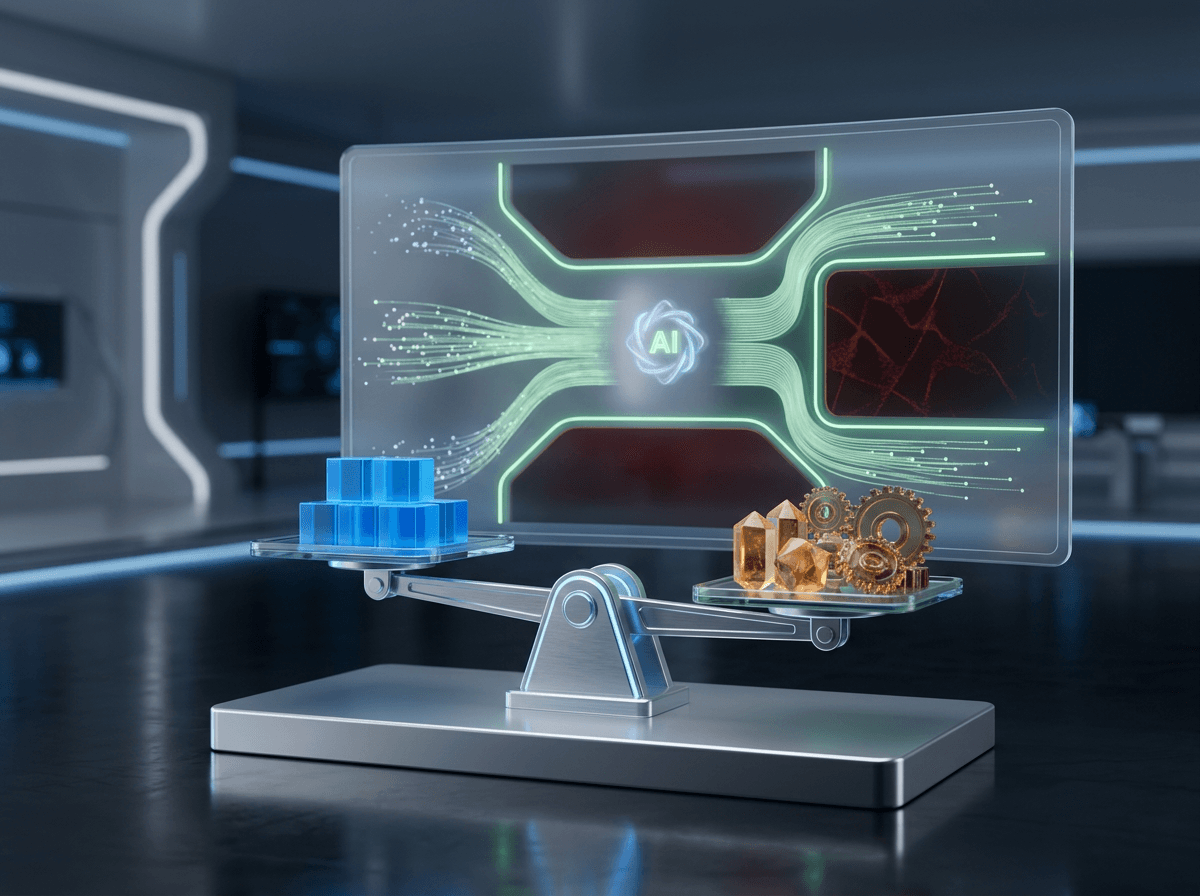

What stakeholders mean by “reliable” (and why they don’t agree)

Once you try to pin down what “reliable” means, the room splits fast. Support often means “gives the same steps every time,” so agents can reuse answers and customers don’t bounce back with “but last time you said…”. Legal usually means “never makes an unapproved promise,” even if that forces the assistant to be slower, more generic, or to refuse more often.

Product teams may mean “solves the problem,” even when the question is incomplete. Ops may mean “doesn’t create cleanup work,” like tickets that need re-triage because the assistant picked the wrong form or policy path. And brand may mean “sounds like us,” which can clash with compliance language that needs to be explicit.

These definitions pull in opposite directions. If you chase repeatability, you’ll frustrate users whose details don’t fit the script; if you chase helpfulness, you’ll get variation that looks like drift. That variation becomes visible the moment users get messy.

Adaptability shows up the moment users get messy

That “moment users get messy” is usually a real chat: someone starts with “Need access,” then adds “for a contractor,” then says “actually it’s urgent, I’m locked out,” and drops a screenshot. If your assistant adapts to each new detail, it will change its path midstream—different questions, different form, different policy language—because it’s trying to reduce risk while still solving the problem.

Adaptability also kicks in when users don’t know what to ask for. A manager might say “set up payroll” when they really mean “add a new hire,” or a customer might ask “refund” when the right move is “exchange.” If the assistant treats those as fixed intents, it fails fast. If it re-interprets intent as the conversation evolves, answers can look inconsistent even when they’re correct.

More flexibility means more “it depends” logic, and that logic is hard to explain, test, and audit—especially when a user changes one small constraint late in the thread.

Where the tug-of-war actually comes from inside the stack

That late-thread constraint change is where your stack has to pick a side. If you try to “lock” behavior, you reach for things like templates, fixed decision trees, and retrieval that only pulls from approved sources. Those tools reduce variation, but they also narrow what the assistant can do when the user’s details don’t fit the expected slots.
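To make “retrieval that only pulls from approved sources” concrete, here is a minimal Python sketch of a locked retrieval step. It assumes your retriever already returns scored candidates tagged with a source label; the `Doc` type, the source names, and `APPROVED_SOURCES` are hypothetical placeholders, not part of any particular retrieval library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    source: str   # hypothetical source label, e.g. "policy-portal" or "community-wiki"
    score: float  # relevance score from whatever retriever you already use


# Hypothetical allowlist: only these sources may ground customer-facing answers.
APPROVED_SOURCES = frozenset({"policy-portal", "support-kb"})


def locked_retrieve(candidates: list[Doc], k: int = 3) -> list[Doc]:
    """Drop unapproved sources, then return the top-k documents by score.

    Ties are broken by doc_id so the same candidate set always yields the same order.
    """
    approved = [d for d in candidates if d.source in APPROVED_SOURCES]
    approved.sort(key=lambda d: (-d.score, d.doc_id))
    return approved[:k]


if __name__ == "__main__":
    candidates = [
        Doc("wiki-77", "community-wiki", 0.95),  # most relevant, but not approved
        Doc("pol-03", "policy-portal", 0.80),
        Doc("kb-12", "support-kb", 0.80),
        Doc("kb-40", "support-kb", 0.40),
    ]
    print([d.doc_id for d in locked_retrieve(candidates, k=2)])  # ['kb-12', 'pol-03']
```

The most relevant candidate (`wiki-77`) never reaches the model. That is exactly the narrowing described above: fewer surprises, but less to work with when a user’s case falls outside approved content.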

If you instead let the model infer missing pieces, reinterpret intent, and rewrite its own plan as new info arrives, you get better outcomes on messy chats. But you also introduce multiple moving parts that can diverge: different retrieved documents, different tool outputs, different prompt assembly, and even different model sampling. Two users can ask the same question, but one triggers a policy snippet in retrieval while the other doesn’t, or one hits a tool error and the assistant “fills in” with a guess.
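When two users get different answers, the first question is which of those moving parts actually differed. A minimal sketch, assuming you can capture per turn the retrieved document IDs, tool call statuses, the assembled prompt, and sampling settings; `TurnTrace` and its fields are hypothetical names, not a specific tracing framework’s schema:

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class TurnTrace:
    """Hypothetical record of the inputs that shaped one assistant turn."""
    retrieved_doc_ids: tuple[str, ...]
    tool_statuses: tuple[tuple[str, str], ...]  # (tool name, "ok" / "error" / "timeout")
    prompt_text: str                            # the fully assembled prompt
    temperature: float
    model_version: str

    def fingerprint(self) -> dict:
        """Summarize the turn so two traces can be compared field by field."""
        return {
            "docs": tuple(sorted(self.retrieved_doc_ids)),
            "tools": tuple(sorted(self.tool_statuses)),
            # Hash the prompt so large prompts can be compared cheaply.
            "prompt_hash": hashlib.sha256(self.prompt_text.encode("utf-8")).hexdigest()[:12],
            "temperature": self.temperature,
            "model": self.model_version,
        }


def diff_traces(a: TurnTrace, b: TurnTrace) -> dict:
    """Return only the fingerprint fields that differ, as (a_value, b_value) pairs."""
    fa, fb = a.fingerprint(), b.fingerprint()
    return {key: (fa[key], fb[key]) for key in fa if fa[key] != fb[key]}


if __name__ == "__main__":
    user_a = TurnTrace(("faq-access", "policy-contractor"), (("directory_lookup", "ok"),),
                       "prompt A", 0.7, "model-v1")
    user_b = TurnTrace(("faq-access",), (("directory_lookup", "timeout"),),
                       "prompt B", 0.7, "model-v1")
    print(sorted(diff_traces(user_a, user_b)))  # ['docs', 'prompt_hash', 'tools']
```

In this example the diff points at a missing policy document and a timed-out tool rather than at sampling, which narrows where to look before anyone starts rewriting prompts.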

Retrieval optimizes for relevance, orchestration optimizes for flow, and the model optimizes for a plausible answer; none of them optimizes for giving two users the same answer. The next decision is choosing which parts of that flow must never vary, and which parts should adapt.

Choose what must never vary vs what should adapt in your workflow

In practice, the team usually feels the pain when one user gets a crisp “here’s the process” and another gets a softer “it depends,” even though both were just trying to get access or fix billing. That’s your signal to draw a line between outputs you need to hold steady and the parts you can let flex. If you don’t, the assistant will vary wherever it finds room, and the variation will land on the most visible surfaces: tone, commitments, and next-step instructions.

Start by naming the “must never vary” pieces in the workflow. Think: what counts as an approved promise, what policy language must appear verbatim, what escalation triggers are non-negotiable, and what data the assistant must collect before it can act (like identity checks before account changes). Then decide what should adapt: how it asks clarifying questions, which examples it uses, and how it routes users when their first request is vague.
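One way to keep that line explicit is to store the fixed parts as data and let only a narrow, named input flex. A minimal sketch; `FixedRules`, `next_step`, and the account-change values are hypothetical illustrations, not a prescribed schema:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FixedRules:
    """The 'must never vary' part of one workflow (hypothetical example values below)."""
    verbatim_policy: str                  # must appear word for word in the answer
    required_fields: tuple[str, ...]      # data to collect before acting
    escalation_triggers: frozenset[str]   # signals that always force a handoff


ACCOUNT_CHANGE = FixedRules(
    verbatim_policy="Account changes require identity verification.",
    required_fields=("account_id", "identity_check"),
    escalation_triggers=frozenset({"legal_threat", "fraud_suspected"}),
)


def next_step(rules: FixedRules, collected: dict[str, str], signals: set[str],
              question_order: tuple[str, ...] = ()) -> str:
    """Pick the next move. The fixed rules decide whether to act; the
    adaptive question_order only decides the order of clarifying questions."""
    triggered = sorted(signals & rules.escalation_triggers)
    if triggered:
        return "escalate: " + ", ".join(triggered)
    missing = [f for f in rules.required_fields if not collected.get(f)]
    # Adaptive part: reorder missing fields, but never drop one.
    # Fields not named in question_order keep their original order, last.
    missing.sort(key=lambda f: question_order.index(f) if f in question_order else len(question_order))
    if missing:
        return "ask for: " + missing[0]
    return "act"
```

For example, `next_step(ACCOUNT_CHANGE, {}, set(), ("identity_check",))` asks for the identity check first, but no ordering choice can skip it. Keeping the rigid pieces in one small object also makes them easy to review when a policy changes.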

Every “never vary” rule you add needs upkeep when policies change, and it can force awkward conversations when a user’s situation doesn’t fit the allowed slots. Make the rigid parts small and high-impact, so the assistant can still handle the messy middle—and then you can talk guardrails.

If you allow flexibility, what guardrails keep it predictable?

Those “messy middle” moments are where teams usually loosen the assistant just enough to keep chats moving—then wonder why it starts sounding different from one thread to the next. A user adds “it’s for a contractor” and the assistant shifts from a simple access checklist to policy language, exceptions, and new questions. That flexibility is useful, but without boundaries it turns into output that feels random.

Keep it predictable by constraining the shape of decisions, not the exact words. Require a short classification step (issue type, risk level, and whether it can act or must escalate), then bind each class to fixed actions: required questions, approved snippets, and a clear “what happens next.” Let the assistant adapt inside the box—ordering questions, choosing examples, and mirroring user phrasing—while logging which class it picked and why.
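The “classify, then bind” pattern can be sketched in a few lines of Python. It assumes the model’s classification step has already been parsed into a small structured object; the class names, snippet IDs, and playbook contents below are hypothetical. The wording can still adapt inside each playbook, but the questions, snippet, and next step for a class are fixed, and every decision produces an audit record.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Classification:
    """Output of the short classification step, assumed to be parsed from the model."""
    issue_type: str   # e.g. "access", "billing"
    risk: str         # "low" or "high"
    can_act: bool     # False means the model itself judged this must escalate
    reason: str       # the model's one-line justification, kept for audit


# Hypothetical playbooks: each class is bound to fixed questions, snippet, and next step.
PLAYBOOKS = {
    ("access", "low"): {
        "required_questions": ("Which system do you need access to?",),
        "snippet_id": "ACCESS_STANDARD",
        "next_step": "Submit the standard access request form.",
    },
    ("access", "high"): {
        "required_questions": ("Is this access for a contractor?", "Who is the sponsoring manager?"),
        "snippet_id": "ACCESS_CONTRACTOR_POLICY",
        "next_step": "The security team will review the request.",
    },
}

ESCALATE = {
    "required_questions": (),
    "snippet_id": "ESCALATION_NOTICE",
    "next_step": "Handing off to a human agent.",
}


def route(c: Classification) -> tuple[dict, dict]:
    """Bind a classification to its fixed playbook and build an audit record.

    Unmapped classes and anything the model marked as non-actionable escalate.
    """
    playbook = PLAYBOOKS.get((c.issue_type, c.risk)) if c.can_act else None
    chosen = playbook if playbook is not None else ESCALATE
    audit = {
        "class": f"{c.issue_type}/{c.risk}",
        "can_act": c.can_act,
        "snippet_id": chosen["snippet_id"],
        "reason": c.reason,
    }
    return chosen, audit
```

Defaulting unmapped classes to escalation means a new kind of request fails safe instead of improvising, and the audit record answers “which class did it pick and why” without reading the transcript.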

If retrieval returns conflicting docs, or a tool times out, the assistant needs a forced fallback path (“I can’t verify, so I’m escalating”) instead of filling gaps. Once those guardrails exist, you can prove they hold under drift.
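A forced fallback works best as a hard branch in code rather than an instruction the model may or may not follow. A minimal sketch, assuming retrieved policies arrive as hypothetical `(policy_id, version)` pairs and a tool call reports either a value or an error; treating “two versions of the same policy” as a conflict is one simple rule among many possible ones:

```python
from typing import Optional

# Fixed fallback text, used verbatim whenever verification fails.
FALLBACK = "I can't verify this right now, so I'm escalating to a human agent."


def has_conflict(docs: list[tuple[str, str]]) -> bool:
    """True if retrieval returned more than one version of the same policy."""
    versions: dict[str, set[str]] = {}
    for policy_id, version in docs:
        versions.setdefault(policy_id, set()).add(version)
    return any(len(v) > 1 for v in versions.values())


def answer_or_fallback(tool_value: Optional[str], tool_error: Optional[str],
                       docs: list[tuple[str, str]]) -> str:
    """Return a grounded answer only when the tool succeeded and sources agree."""
    if tool_error is not None or tool_value is None:
        return FALLBACK  # timeout, error, or empty result: never guess
    if has_conflict(docs):
        return FALLBACK  # conflicting policy versions: never pick one silently
    return f"Verified: {tool_value}"
```

Because the fallback text is a constant, it is also easy to assert on in tests.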

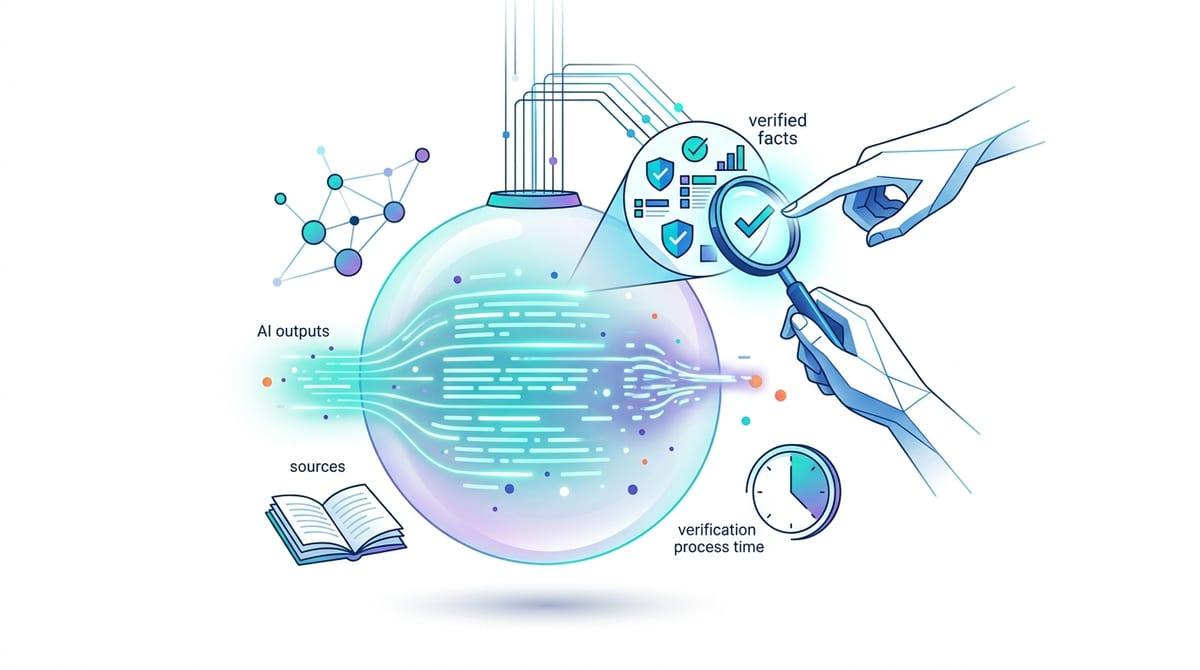

Proving it works: tests and monitoring that catch drift without freezing the assistant

Once you add a forced fallback path, you need to know it still fires when the system changes. Start with a small “golden set” of chats that cover your non-negotiables: promises, escalations, identity checks, and refusal language. Run them on every prompt, retrieval, or model change, and score both outcomes and structure (did it pick the right class, ask the required questions, and use the approved snippet?).
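Scoring structure instead of wording might look like the sketch below. It assumes each run emits a structured trace (chosen class, IDs of questions asked, snippet ID); `run_assistant` stands in for whatever hypothetical harness calls your assistant, and the case contents are illustrative:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class GoldenCase:
    chat: str
    expected_class: str
    required_questions: frozenset[str]  # question IDs that must be asked
    expected_snippet: str


@dataclass(frozen=True)
class RunOutput:
    """Structured trace of one run (assumes the assistant logs these fields)."""
    chosen_class: str
    questions_asked: frozenset[str]
    snippet_id: str


def score(case: GoldenCase, out: RunOutput) -> dict[str, bool]:
    """Score structure, not wording."""
    return {
        "class": out.chosen_class == case.expected_class,
        "questions": case.required_questions <= out.questions_asked,
        "snippet": out.snippet_id == case.expected_snippet,
    }


def run_suite(cases: list[GoldenCase],
              run_assistant: Callable[[str], RunOutput]) -> list[tuple[str, list[str]]]:
    """Return (chat, failed checks) for every case that fails any check."""
    failures = []
    for case in cases:
        checks = score(case, run_assistant(case.chat))
        failed = [name for name, passed in checks.items() if not passed]
        if failed:
            failures.append((case.chat, failed))
    return failures
```

Because nothing here compares text, a rewording passes while a skipped required question fails.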

Then watch production for slow drift. Sample conversations by class, track tool errors and fallback rates, and alert on new answer shapes (like skipping a required step) rather than wording. The hard cost is review time: someone has to label failures, update the golden set when policy changes, and resist “fixes” that make the assistant safe but useless.
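A per-class drift check along these lines is one option. It is a sketch under stated assumptions: turns are logged with a hypothetical step sequence and two boolean flags, and the thresholds (`min_share`, `max_rate_jump`) are placeholders you would tune against your own traffic:

```python
from collections import Counter


def rate(turns: list[dict], key: str) -> float:
    """Share of turns where a boolean field is true."""
    return sum(1 for t in turns if t[key]) / len(turns) if turns else 0.0


def drift_alerts(baseline: list[dict], recent: list[dict],
                 min_share: float = 0.05, max_rate_jump: float = 0.10) -> list[str]:
    """Compare a recent sample against a baseline sample for the same class.

    Each turn is a dict with hypothetical fields: "steps" (ordered step names),
    "tool_error" (bool), and "fell_back" (bool).
    """
    alerts = []
    known_shapes = {tuple(t["steps"]) for t in baseline}
    recent_shapes = Counter(tuple(t["steps"]) for t in recent)
    for shape, n in recent_shapes.items():
        # Alert on step sequences never seen in the baseline, not on wording.
        if shape not in known_shapes and n / len(recent) >= min_share:
            alerts.append(f"new shape {' > '.join(shape)} in {n}/{len(recent)} turns")
    for key in ("tool_error", "fell_back"):
        jump = rate(recent, key) - rate(baseline, key)
        if jump > max_rate_jump:
            alerts.append(f"{key} rate up {jump:.0%}")
    return alerts
```

Running it separately for each class you sample keeps a new shape in a low-volume, high-risk class from being drowned out by routine traffic.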