You have fairness numbers—why does it still feel inconclusive?

You run the model, slice results by group, and the dashboard lights up with fairness metrics. One looks fine. Another looks bad. A third moves the “wrong” way when you improve accuracy.

It feels inconclusive because the numbers aren’t answering the same question. Each metric bakes in a different idea of what “fair” means: equal approval rates, equal error rates, equal chances for qualified people, or something else. If you don’t choose which idea matches your product risk, you’re just collecting scores.

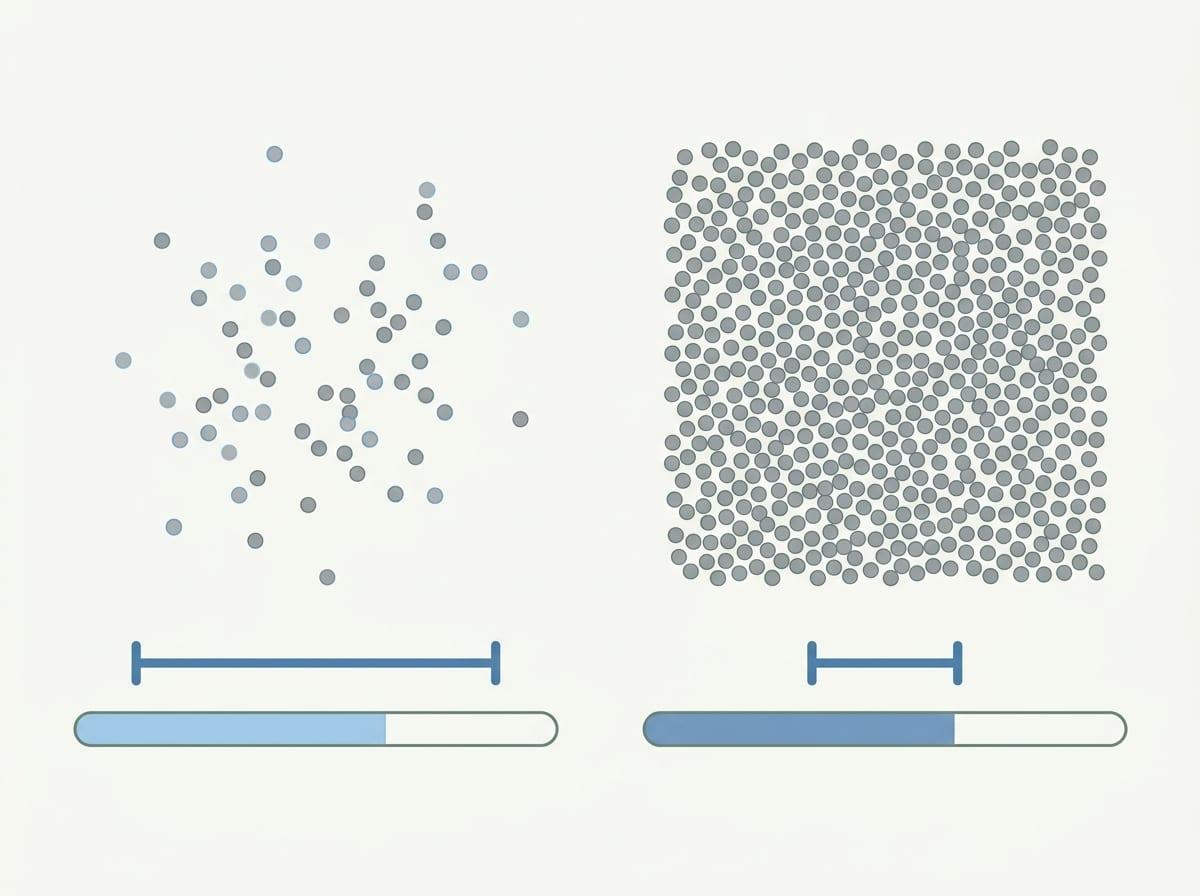

There’s also a practical constraint: small subgroups and rare outcomes make metrics noisy, so “improvement” can be a sampling artifact. Before picking a metric, you need a reference point: fair compared to what?
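A quick way to see how noisy a small group is: put a confidence interval on its rate. The sketch below uses the Wilson score interval, one standard choice for a binomial proportion. The group sizes and error counts are made-up illustrations, not data from any real system.

```python
import math

def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval (z=1.96) for a binomial rate."""
    if n == 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return center - half, center + half

# Hypothetical counts: the same observed 10% error rate in a large and a small group.
for name, errors, n in [("large group", 500, 5000), ("small group", 5, 50)]:
    lo, hi = wilson_interval(errors, n)
    print(f"{name}: rate={errors / n:.2f}, 95% CI=({lo:.3f}, {hi:.3f}), width={hi - lo:.3f}")
```

The observed rate is the same in both groups, but the small group's interval comes out roughly ten times wider because width shrinks with the square root of n. A swing of several points there can easily be noise.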

Fair compared to what: choosing the reference point before choosing a metric

Fair compared to what usually comes down to the alternative you’d accept if this model didn’t exist. In lending, is the reference point the prior underwriting rules, a human review team, or a legally defined policy? If you compare to last quarter’s model, “fairness improved” might just mean you copied its quirks. If you compare to humans, you need to admit what humans actually did, not what the process doc says.

Pick the reference point before the metric because it changes what you’re trying to hold constant. If the baseline is a fixed policy, you may care most about whether qualified applicants get similar chances across groups (a “who should pass” question). If the baseline is scarce manual review time, you may care more about equal error rates, because false positives and false negatives burn real ops budget in different ways.

The hard part is access: you may not have clean labels for “qualified,” or consistent logs of human decisions. When that data is missing, write down what you’re using as a proxy and what failure it could hide, then choose metrics that stress-test it.

What would be an unacceptable mistake in this product?

That “stress-test” only matters if you’re clear on what kind of failure would make you pull the plug. In most ML products, there’s one mistake that feels worse than the others, even if overall accuracy looks fine. A lending model that wrongly rejects qualified applicants creates a different harm than one that approves some borderline applicants who later default. A moderation model that wrongly removes legitimate content is a different problem than one that misses some violations.

Write down the unacceptable mistake in plain language, tied to a user story. “A qualified nurse gets screened out and never reaches a human reviewer.” “A legitimate small business loses payouts and can’t make payroll.” Then map that mistake to the error you’re trying to control: false negatives, false positives, or a ranking failure where the wrong cases never surface.
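Once the mistake is mapped to an error type, the per-group rates fall straight out of confusion-matrix counts. This is a minimal sketch. It assumes per-example records of (group, true label, model decision), where 1 means "qualified" and "approved". The record format and the toy data are hypothetical.

```python
from collections import defaultdict

def error_rates_by_group(records):
    """records: iterable of (group, y_true, y_pred) with labels in {0, 1}.

    FNR = FN / (FN + TP): share of true positives the model rejected.
    FPR = FP / (FP + TN): share of true negatives the model accepted.
    Returns {group: {"fnr": ..., "fpr": ..., "n": ...}}; NaN when a class is absent.
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        if y_true == 1 and y_pred == 1:
            c["tp"] += 1
        elif y_true == 1:
            c["fn"] += 1
        elif y_pred == 1:
            c["fp"] += 1
        else:
            c["tn"] += 1
    out = {}
    for group, c in counts.items():
        pos, neg = c["tp"] + c["fn"], c["fp"] + c["tn"]
        out[group] = {
            "fnr": c["fn"] / pos if pos else float("nan"),
            "fpr": c["fp"] / neg if neg else float("nan"),
            "n": pos + neg,
        }
    return out

# Hypothetical toy data: (group, qualified?, approved?)
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0), ("A", 0, 1),
    ("B", 1, 0), ("B", 1, 0), ("B", 1, 1), ("B", 0, 0), ("B", 0, 0),
]
rates = error_rates_by_group(records)
fnr_gap = abs(rates["A"]["fnr"] - rates["B"]["fnr"])
print(rates, f"FNR gap = {fnr_gap:.2f}")
```

Suppose the unacceptable mistake is "a qualified applicant gets screened out." Then the FNR gap is the number to control. If the mistake is a wrongful removal or block, FPR plays that role instead.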

This is where the real-world constraint shows up: you rarely have perfect labels for “qualified” or “harm.” If your proxy is appeals, chargebacks, or downstream performance, say so—and accept that it will miss silent failures. Once you name the mistake, you can choose metrics that limit it across groups, even if other metrics move in the opposite direction.

When ‘treating people the same’ clashes with ‘achieving similar outcomes’

That “opposite direction” feeling often shows up when you try to do two different things at once: apply the same rule to everyone and still end up with similar outcomes across groups. In practice, “same rule” usually means one threshold, one ranking model, one review policy. It feels clean because nobody gets a special case.

But if groups come in with different base rates in your labels or different levels of measurement error in your inputs, one threshold won’t land the same way. A lending score calibrated on repayment can still produce different approval rates. A hiring model can use the same cutoff and still screen out one group more often if the resume signals you rely on are less available or less reliably recorded.
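A small simulation makes the lending case concrete. It assumes each applicant's score equals their true repayment probability, so the score is calibrated by construction in both groups. The two hypothetical groups get different score distributions, which stand in for different base rates. The Beta parameters, group sizes, and seed are arbitrary choices.

```python
import random

def simulate_group(rng, alpha, beta, n):
    """Calibrated by construction: score ~ Beta(alpha, beta), repaid ~ Bernoulli(score)."""
    scores = [rng.betavariate(alpha, beta) for _ in range(n)]
    labels = [1 if rng.random() < s else 0 for s in scores]
    return scores, labels

rng = random.Random(0)  # fixed seed so the run is reproducible
threshold = 0.5         # one cutoff for everyone
groups = {
    "A": simulate_group(rng, 5, 3, 20_000),  # hypothetical group with a higher base rate
    "B": simulate_group(rng, 3, 5, 20_000),  # hypothetical group with a lower base rate
}

summary = {}
for name, (scores, labels) in groups.items():
    n = len(scores)
    summary[name] = {
        "base_rate": sum(labels) / n,
        "mean_score": sum(scores) / n,  # tracks base_rate because scores are calibrated
        "approval_rate": sum(s >= threshold for s in scores) / n,
    }
    print(name, {k: round(v, 3) for k, v in summary[name].items()})
```

In both groups, the average score tracks the observed repayment rate, so neither group's score is "wrong." The approval gap comes entirely from where each distribution's mass sits relative to the single cutoff.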

You can force similar outcomes by changing thresholds or adding policy guardrails, but that costs something concrete: more manual review, more false positives in the “helped” group, or a drop in overall recall. When those levers move, your metrics will split—use that split as a clue about what’s driving it.
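One common lever is a group-specific threshold chosen to hit a target approval rate. The sketch below picks group B's cutoff as a score quantile so that B's approval rate matches A's. It then reports what that does to B's error rates. The helper names and the toy scores and labels are hypothetical.

```python
def threshold_for_approval_rate(scores, target_rate):
    """Cutoff such that about `target_rate` of scores are >= it (ties may approve a few more)."""
    ranked = sorted(scores, reverse=True)
    k = max(1, round(target_rate * len(ranked)))  # number of people to approve
    return ranked[k - 1]

def rates_at(scores, labels, cutoff):
    """Approval rate, FPR, and FNR at a cutoff; assumes both outcomes are present."""
    approved = [s >= cutoff for s in scores]
    on_neg = [a for a, y in zip(approved, labels) if y == 0]
    on_pos = [a for a, y in zip(approved, labels) if y == 1]
    return {
        "approval_rate": sum(approved) / len(approved),
        "fpr": sum(on_neg) / len(on_neg),
        "fnr": 1 - sum(on_pos) / len(on_pos),
    }

# Hypothetical toy data: model scores and true outcomes (1 = repaid).
a_scores = [0.95, 0.9, 0.85, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
a_labels = [1, 1, 1, 1, 1, 0, 1, 0, 0, 0]
b_scores = [0.8, 0.7, 0.6, 0.45, 0.4, 0.35, 0.3, 0.25, 0.2, 0.1]
b_labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 0]

shared = 0.5
print("A, shared cutoff:", rates_at(a_scores, a_labels, shared))
print("B, shared cutoff:", rates_at(b_scores, b_labels, shared))

# Force B's approval rate to match A's with a group-specific cutoff.
target = rates_at(a_scores, a_labels, shared)["approval_rate"]
b_cutoff = threshold_for_approval_rate(b_scores, target)
print(f"B, cutoff {b_cutoff}:", rates_at(b_scores, b_labels, b_cutoff))
```

On this toy data, matching B's approval rate to A's drives B's false-negative rate to zero and triples its false-positive rate. That is the concrete cost the metrics split is pointing at.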

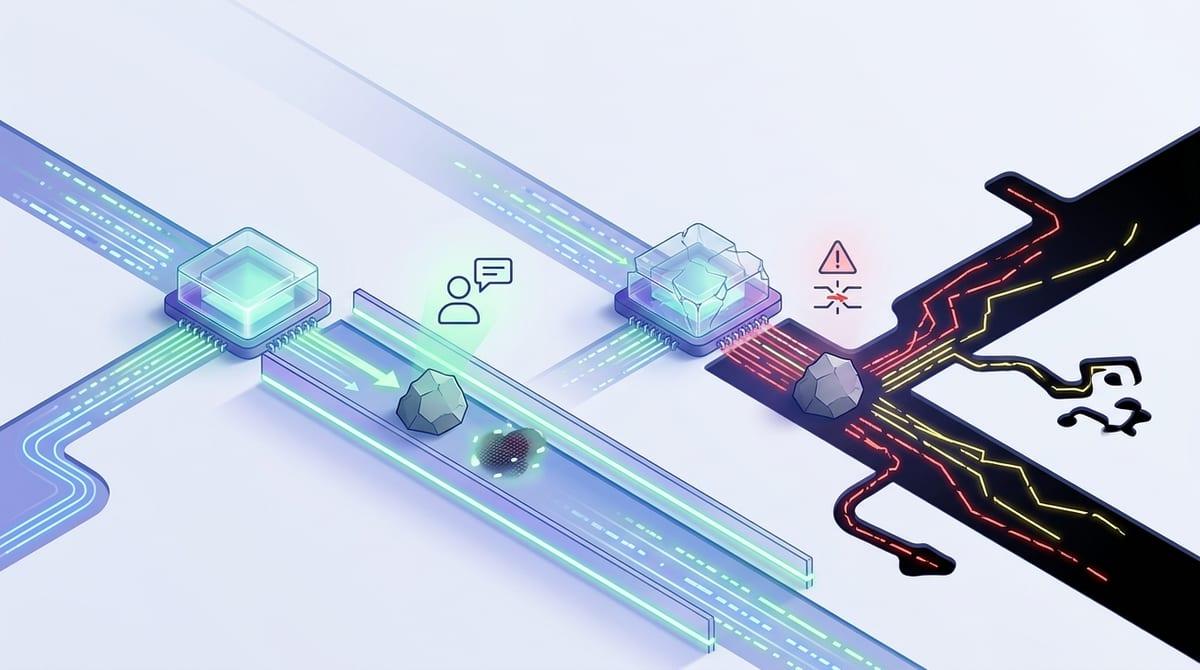

The moment metrics disagree: diagnosing the cause, not arguing the math

That split is usually the fastest way to see what changed in the system. You lower a threshold to reduce false negatives for the group you’re worried about, and now approval-rate parity looks “better” while false-positive parity looks worse. Don’t argue which metric is “right” yet. Ask what lever you pulled and which error it predictably shifts.

A simple diagnostic is to separate three causes: base rates, score quality, and policy. If one group has a different true rate in the label (or in your proxy label), then equal error rates and equal approval rates can’t both hold unless the model is no better than chance, meaning its true-positive rate equals its false-positive rate. If the model’s scores are noisier for one group (missing data, inconsistent signals, fewer examples), you’ll see worse calibration or worse ranking there, and equalized odds will punish you harder. If policy adds steps (manual review, appeals, overrides), post-policy outcomes can drift away from model-only metrics.
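The base-rate conflict comes down to one line of arithmetic. Take a group g with base rate p_g, true-positive rate TPR_g, and false-positive rate FPR_g at the chosen threshold. Its approval rate is a weighted mix of those two rates:

$$
\mathrm{approval}_g = \mathrm{TPR}_g \, p_g + \mathrm{FPR}_g \, (1 - p_g) = \mathrm{FPR}_g + (\mathrm{TPR}_g - \mathrm{FPR}_g)\, p_g
$$

Now equalize error rates across groups A and B, so that TPR_A = TPR_B = t and FPR_A = FPR_B = f. The approval gap becomes

$$
\mathrm{approval}_A - \mathrm{approval}_B = (t - f)\,(p_A - p_B)
$$

This is zero only if the base rates match or t = f. In the second case, the decisions are independent of the label.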

The constraint: with small subgroups, the error bars may be too wide to tell which cause dominates.

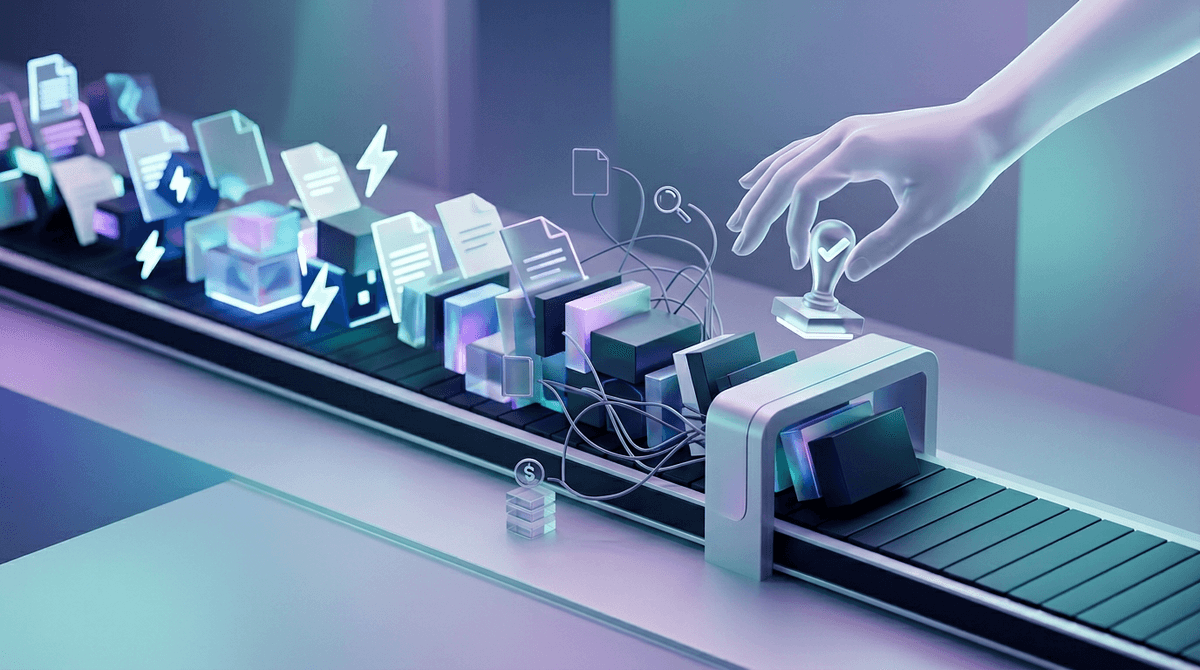

A minimal scoreboard you can defend to stakeholders

That “defensible” scoreboard starts with what you already do in reviews: ask whether the model is accurate enough, whether it breaks in one group, and whether the business impact is acceptable. Keep it to 4–6 numbers per major group, plus error bars.

Include (1) overall utility: AUC or PR-AUC, and the operating-point confusion matrix; (2) the unacceptable mistake: the gap in false negatives or false positives at the chosen threshold (whichever maps to your harm); (3) a “who gets through” view: selection/approval rate by group, so policy owners see distributional impact; (4) calibration-by-group (or observed vs. predicted buckets) so you don’t “fix” parity by making scores less truthful.
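Here is a minimal sketch of one row of that scoreboard, computed per group in plain Python so it has no library dependencies. The function names, the (scores, labels) input format, and the ten-bucket calibration table are illustrative assumptions. It computes ROC AUC only, not PR-AUC, and it leaves out error bars, which you would add separately (for example, with a bootstrap).

```python
def auc(scores, labels):
    """ROC AUC via the rank-sum (Mann-Whitney U) formula; tied scores share an average rank."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    if n_pos == 0 or n_neg == 0:
        return float("nan")
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # 1-based average rank of the tie block
        i = j + 1
    rank_sum_pos = sum(r for r, y in zip(ranks, labels) if y == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)

def scoreboard(scores, labels, threshold, n_buckets=10):
    """One group's row: AUC, confusion matrix at the operating threshold,
    FNR/FPR, selection rate, and observed-vs-predicted calibration buckets."""
    tp = fp = fn = tn = 0
    for s, y in zip(scores, labels):
        approved = s >= threshold
        if approved and y == 1:
            tp += 1
        elif approved:
            fp += 1
        elif y == 1:
            fn += 1
        else:
            tn += 1
    buckets = {}
    for s, y in zip(scores, labels):
        idx = min(int(s * n_buckets), n_buckets - 1)  # assumes scores in [0, 1]
        buckets.setdefault(idx, []).append((s, y))
    calibration = {  # bucket -> (mean predicted, observed positive rate, count)
        idx: (sum(s for s, _ in rows) / len(rows), sum(y for _, y in rows) / len(rows), len(rows))
        for idx, rows in sorted(buckets.items())
    }
    return {
        "auc": auc(scores, labels),
        "confusion": {"tp": tp, "fp": fp, "fn": fn, "tn": tn},
        "fnr": fn / (fn + tp) if fn + tp else float("nan"),
        "fpr": fp / (fp + tn) if fp + tn else float("nan"),
        "selection_rate": (tp + fp) / len(labels),
        "calibration": calibration,
    }

# Hypothetical toy data per group: (scores, labels).
groups = {
    "A": ([0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1], [1, 1, 0, 1, 0, 1, 0, 0]),
    "B": ([0.7, 0.55, 0.45, 0.4, 0.35, 0.2], [1, 0, 1, 1, 0, 0]),
}
boards = {g: scoreboard(s, y, threshold=0.5) for g, (s, y) in groups.items()}
for g, board in boards.items():
    print(g, {k: round(board[k], 3) for k in ("auc", "fnr", "fpr", "selection_rate")})
print("FNR gap:", round(abs(boards["A"]["fnr"] - boards["B"]["fnr"]), 3))
```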

The downside is workload: this scoreboard needs stable labels and enough subgroup volume, and you’ll spend time defending confidence intervals. That effort pays off when you set ship thresholds and exceptions.

Turning results into a ship decision: thresholds, guardrails, and exceptions

That effort pays off when you stop debating “good” and start writing down “ship.” Pick the operating threshold the same way you would without fairness in the room: the point that hits your precision/recall (or cost) target. Then add fairness gates tied to your unacceptable mistake: for example, “false-negative rate gap between any two major groups must be under X points, with a 95% interval that doesn’t cross Y.” Make the gates few, numeric, and checked on the same time window.
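Here is a minimal sketch of one such gate. It assumes per-example (true label, decision) pairs grouped by group name, and it uses a percentile bootstrap for the interval. X and Y become the hypothetical parameters `max_gap` and `max_upper`. The resample count and seed are arbitrary choices, not recommendations.

```python
import random
from itertools import combinations

def fnr(rows):
    """False-negative rate over (y_true, y_pred) pairs; NaN if there are no positives."""
    preds_on_positives = [y_pred for y_true, y_pred in rows if y_true == 1]
    if not preds_on_positives:
        return float("nan")
    return preds_on_positives.count(0) / len(preds_on_positives)

def fnr_gap_gate(by_group, max_gap, max_upper, n_boot=2000, seed=0):
    """For every pair of groups, pass only if the observed FNR gap is below `max_gap`
    AND the upper end of a 95% percentile-bootstrap interval for the gap is below
    `max_upper`. Returns (all_passed, per-pair details)."""
    rng = random.Random(seed)
    details = {}
    for a, b in combinations(sorted(by_group), 2):
        rows_a, rows_b = by_group[a], by_group[b]
        gap = abs(fnr(rows_a) - fnr(rows_b))
        boots = []
        for _ in range(n_boot):
            # Resample each group independently, with replacement.
            diff = abs(fnr(rng.choices(rows_a, k=len(rows_a)))
                       - fnr(rng.choices(rows_b, k=len(rows_b))))
            if diff == diff:  # skip NaN draws (a resample with no positives)
                boots.append(diff)
        boots.sort()
        upper = boots[int(0.975 * (len(boots) - 1))] if boots else float("nan")
        details[(a, b)] = {"gap": gap, "upper95": upper,
                           "pass": gap < max_gap and upper < max_upper}
    return all(d["pass"] for d in details.values()), details

# Hypothetical data: (qualified?, approved?) pairs per group.
a_rows = [(1, 0)] * 20 + [(1, 1)] * 180 + [(0, 0)] * 200  # FNR 0.10
b_rows = [(1, 0)] * 8 + [(1, 1)] * 32 + [(0, 0)] * 40     # FNR 0.20, far fewer rows
passed, detail = fnr_gap_gate({"A": a_rows, "B": b_rows}, max_gap=0.05, max_upper=0.15)
print(passed, detail)
```

Here the gate fails on the point gap alone. Group B's small volume also makes the interval wide, so even a smaller observed gap could fail the upper-bound check.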

Guardrails are the policy levers you’ll actually pull when a gate fails. Common ones are a manual-review band near the threshold, a second-pass model for low-signal cases, or a rule that blocks action when key fields are missing. Each has a concrete cost: more ops headcount, longer queues, or lower automation rate.
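Here is a sketch of two of those guardrails as a single routing function: a manual-review band around the threshold, and a rule that blocks automated action when key fields are missing. The field names, band width, and outcome labels are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    route: str   # "approve", "reject", or "manual_review"
    reason: str

REQUIRED_FIELDS = ("income", "employment_length")  # hypothetical key fields

def route_case(score, features, threshold=0.5, band=0.05):
    """Block on missing key fields, send near-threshold scores to review, else decide."""
    missing = [f for f in REQUIRED_FIELDS if features.get(f) is None]
    if missing:
        return Decision("manual_review", f"missing fields: {', '.join(missing)}")
    if abs(score - threshold) < band:
        return Decision("manual_review", "score inside review band")
    if score >= threshold:
        return Decision("approve", "score above threshold")
    return Decision("reject", "score below threshold")

# Hypothetical cases: (score, features)
cases = [
    (0.91, {"income": 52000, "employment_length": 4}),
    (0.52, {"income": 38000, "employment_length": 1}),
    (0.30, {"income": 41000, "employment_length": 6}),
    (0.77, {"income": None, "employment_length": 2}),
]
decisions = [route_case(s, f) for s, f in cases]
automation_rate = sum(d.route != "manual_review" for d in decisions) / len(decisions)
for d in decisions:
    print(d)
print(f"automation rate: {automation_rate:.0%}")
```

The automation rate, and the review queue it implies, is where the guardrail's cost shows up. It is worth tracking per group. If key fields are missing more often for one group, the missing-field rule will route that group to review more often.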

Write down allowed exceptions up front: low-volume groups with wide error bars, known label lag, or launch phases where you accept review-heavy operation. If you can’t describe the exception in one sentence, it’s a loophole.

After launch, what could drift—and what you’ll monitor to stay honest

If you can’t describe the exception in one sentence, it’s a loophole—and after launch, loopholes multiply because the system around the model changes. The applicant mix shifts, upstream data fields get renamed, reviewers learn new habits, and your label pipeline lags or backfills. Any of those can move group metrics even if the model weights never change.

Monitor the same small scoreboard on a fixed cadence, but add “canary” checks: missing-field rates by group, score distribution drift, calibration buckets, and the specific error gap tied to your unacceptable mistake. Expect pain: low-volume groups will swing week to week, so you’ll need longer windows or pooled rollups to avoid chasing noise. When a gate trips, log the cause, the policy lever you pulled, and when you’ll re-check.
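Two of those canaries are easy to compute per group. The sketch below uses the population stability index (PSI) for score-distribution drift, which is one common choice swapped in here because the text does not name a drift statistic. It also computes missing-field rates. The bucket edges, the epsilon, the field name, and the data are hypothetical. Alert levels for PSI are a team decision rather than a fixed standard.

```python
import bisect
import math

def bucket_shares(xs, edges):
    """Share of values in each [edges[i], edges[i+1]) bucket; out-of-range values go to the end buckets."""
    counts = [0] * (len(edges) - 1)
    for x in xs:
        i = bisect.bisect_right(edges, x) - 1
        counts[min(max(i, 0), len(counts) - 1)] += 1
    return [c / len(xs) for c in counts]

def psi(baseline, current, edges, eps=1e-6):
    """Population stability index: sum over buckets of (cur - base) * ln(cur / base).
    `eps` floors empty buckets so the log stays finite."""
    base = bucket_shares(baseline, edges)
    cur = bucket_shares(current, edges)
    return sum((c - b) * math.log(max(c, eps) / max(b, eps)) for b, c in zip(base, cur))

def missing_rate_by_group(rows, field):
    """rows: iterable of (group, record dict). Share of each group's records where `field` is absent or None."""
    totals, missing = {}, {}
    for group, record in rows:
        totals[group] = totals.get(group, 0) + 1
        if record.get(field) is None:
            missing[group] = missing.get(group, 0) + 1
    return {g: missing.get(g, 0) / n for g, n in totals.items()}

# Hypothetical data: one group's scores at launch vs. this week.
edges = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]
launch_scores = [0.1, 0.3, 0.5, 0.7, 0.9] * 20
week_scores = [0.1, 0.3, 0.5, 0.7, 0.7, 0.9] * 20  # extra mass in the 0.6 to 0.8 bucket
print(f"PSI vs launch: {psi(launch_scores, week_scores, edges):.3f}")

rows = [("A", {"income": 1}), ("A", {"income": 2}), ("A", {"income": None}), ("A", {"income": 4}),
        ("B", {"income": None}), ("B", {}), ("B", {"income": 2}), ("B", {"income": 3})]
print(missing_rate_by_group(rows, "income"))
```

Run these on the same fixed window as the scoreboard. For low-volume groups, compute them over a longer window so that a single week's swing does not trip an alert.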