Your “general-purpose” model hits a new use-case—what actually needs to change?

You ship a feature on a “general-purpose” model, then a new request lands: “Can it also handle invoices?” or “Can it do Spanish support?” The model might be capable, but your product still has to supply the right inputs, route the work to the right tools, and check the output before it reaches a user.

In practice, adaptability comes from four ingredients: the model you picked (and what it was trained to do), the way you steer it (prompting and tool calls), the data you can reliably fetch at runtime (docs, customer records, policies), and the system around it (logging, evals, fallbacks). If any one is weak, “just add a new use-case” turns into a scramble.

A new task often means new data contracts, redaction rules, and latency budgets—plus time to label examples and set up tests. The sooner you can tell whether a change is “prompt-only” or “pipeline-and-product,” the less you’ll rebuild under pressure.

When a prompt tweak works… and when it’s a warning sign

That “prompt-only” test usually starts the same way: someone adds a few lines of instructions, the demo looks better, and the team wants to ship. Sometimes that’s exactly right—especially when the new use-case is mostly a formatting change, a tone shift, or a clearer definition of what counts as “done.” If the model already has the needed knowledge and you can confirm it with a small set of real inputs, a prompt tweak can buy you weeks.

It’s a warning sign when the prompt starts carrying business logic. If you need a long list of exceptions (“unless the customer is enterprise,” “unless the region is EU,” “unless it’s an invoice over $5k”), you’re encoding rules you can’t easily test or version. The output may look stable in a few examples, then fail on edge cases like partial documents or missing fields.
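One way to get those exceptions out of the prompt is to express them as a plain function that can be unit-tested and versioned. The sketch below is illustrative only: the field names (`tier`, `region`, `amount_usd`), the path names, and the rule ordering are assumptions that echo the examples above, not a real policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InvoiceRequest:
    # Hypothetical fields mirroring the exceptions quoted in the text.
    tier: str          # e.g. "enterprise" or "standard"
    region: str        # e.g. "EU" or "US"
    amount_usd: float


def review_path(req: InvoiceRequest) -> str:
    """Pick a handling path in code, so each rule can be tested on its own."""
    if req.amount_usd > 5000:      # "unless it's an invoice over $5k"
        return "manual_review"
    if req.region == "EU":         # "unless the region is EU"
        return "eu_policy"
    if req.tier == "enterprise":   # "unless the customer is enterprise"
        return "enterprise_policy"
    return "default"
```

The prompt then only needs to know which path it is on, and an edge case becomes a failing test rather than a surprise in production.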

A quick check: does success depend on information the model can’t reliably infer? If yes, you’re not fixing a prompt—you’re missing retrieval, tooling, or structured inputs, and that’s where the work will move next.

What kind of model are you really buying: base, instruct, tool-using, or fine-tuned?

That shift toward retrieval, tooling, or structured inputs is where “model choice” stops sounding abstract. A base model gives you raw capability, but it won’t reliably follow your process; you’ll spend time fighting inconsistent formats and silent rule breaks. An instruct model is trained to follow directions, so it’s usually the safest default for product work, but it can still guess when you needed it to say “I don’t know.”

A tool-using setup is less about the model and more about the contract: when to call search, when to fetch a customer record, how to pass results back, and what to do when a tool fails. This often makes new use-cases feel easier, but it raises plumbing costs—permissions, rate limits, retries, and monitoring.
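The "what to do when a tool fails" part of that contract can be sketched as a small wrapper that retries and then falls back explicitly. This is a minimal sketch under stated assumptions: `call_tool`, `answer_with_customer_record`, and the `tier` field are hypothetical names, and a real system would catch narrower exception types and log each failure.

```python
import time
from typing import Callable, Optional


def call_tool(
    tool: Callable[[str], dict],
    query: str,
    retries: int = 2,
    backoff_s: float = 0.0,
) -> Optional[dict]:
    """Call a tool, retrying on failure; return None if every attempt fails."""
    for attempt in range(retries + 1):
        try:
            return tool(query)
        except Exception:
            # Wait a little longer after each failed attempt (linear backoff).
            if attempt < retries:
                time.sleep(backoff_s * (attempt + 1))
    return None


def answer_with_customer_record(tool: Callable[[str], dict], customer_id: str) -> str:
    """Use the tool result if available, otherwise return an explicit fallback."""
    record = call_tool(tool, customer_id)
    if record is None:
        return "FALLBACK: customer record unavailable"
    return f"Customer tier: {record['tier']}"
```

Making the fallback a visible return value, rather than letting the model improvise without the record, keeps the failure easy to monitor.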

Fine-tuning makes sense when you need a consistent style or decision boundary at scale, like classifying tickets into your exact taxonomy. It also commits you to ongoing data collection and retraining, which is where “easy to add” can quietly turn into a backlog.

The moment you realize the bottleneck isn’t the model—it’s your data pipeline

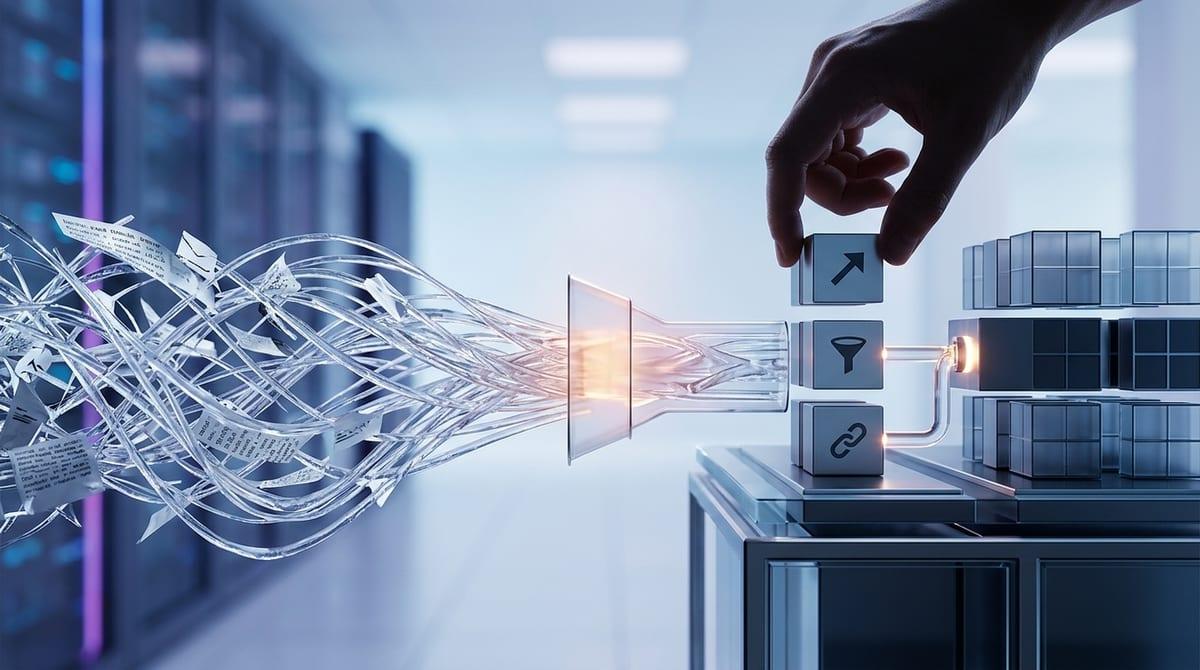

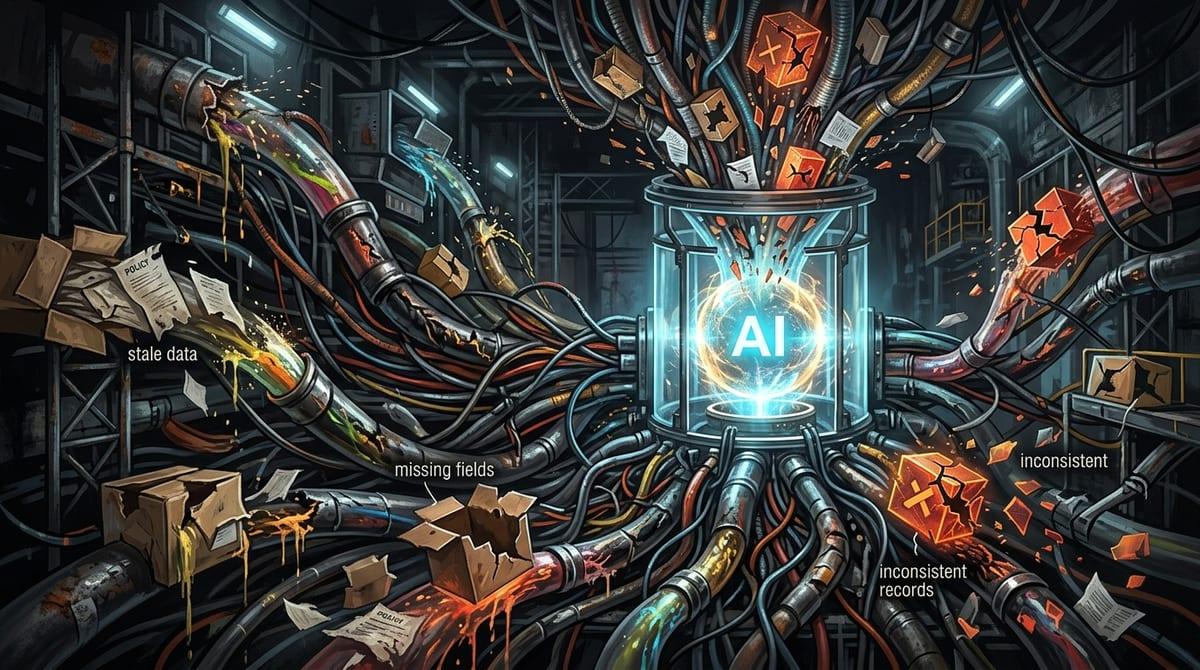

That backlog usually shows up right after a “successful” prototype: the model answers well when you paste clean context into a prompt, then it falls apart in the product because the context is incomplete, stale, or missing entirely. You realize the real question isn’t “can the model do invoices?” It’s “can we reliably pull the right invoice data, policy rules, and customer metadata in under a second, every time?”

Most teams hit the same failure mode: the pipeline can’t produce a stable input contract. One week “customer tier” lives in CRM, the next week it’s in a billing table, and half the records disagree. Retrieval then returns a mix of old PDFs, duplicated docs, and internal notes with sensitive fields you can’t send. The model looks inconsistent, but it’s reacting to inconsistent inputs.

Fixing this costs real time: permissions, redaction, chunking, indexing, and a way to trace every output back to the exact sources used. Until you can answer “what did we show the model?” adding the next task will keep feeling like gambling.
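Answering "what did we show the model?" usually comes down to recording the prompt and the exact source versions for every request. The sketch below assumes a hypothetical `SourceChunk` shape and a hypothetical `build_trace` helper; where the record gets stored is left out.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass(frozen=True)
class SourceChunk:
    doc_id: str    # hypothetical document identifier
    version: str   # hypothetical version label for that document
    text: str


def build_trace(task: str, prompt: str, chunks: List[SourceChunk]) -> dict:
    """Record exactly what was shown to the model for one request."""
    payload = {
        "task": task,
        "prompt": prompt,
        "sources": [asdict(c) for c in chunks],
    }
    # Hash the full payload so identical inputs always get the same trace ID.
    digest = hashlib.sha256(
        json.dumps(payload, sort_keys=True).encode("utf-8")
    ).hexdigest()
    return {
        "trace_id": digest[:16],
        "task": task,
        "source_ids": [f"{c.doc_id}@{c.version}" for c in chunks],
        "payload": payload,
    }
```

With a record like this attached to each output, an inconsistent answer can be checked against the inputs that produced it before anyone blames the model.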

Adding a new task without breaking the old ones: guardrails, evals, and blast radius

Once you can answer “what did we show the model?”, the next surprise is that a change for Task B can quietly damage Task A. You adjust retrieval to pull more policy text for invoices, and suddenly support replies get longer, cite the wrong source, or leak internal phrasing because the context mix changed. The model didn’t “regress” on its own—you changed its inputs and the rules around it.

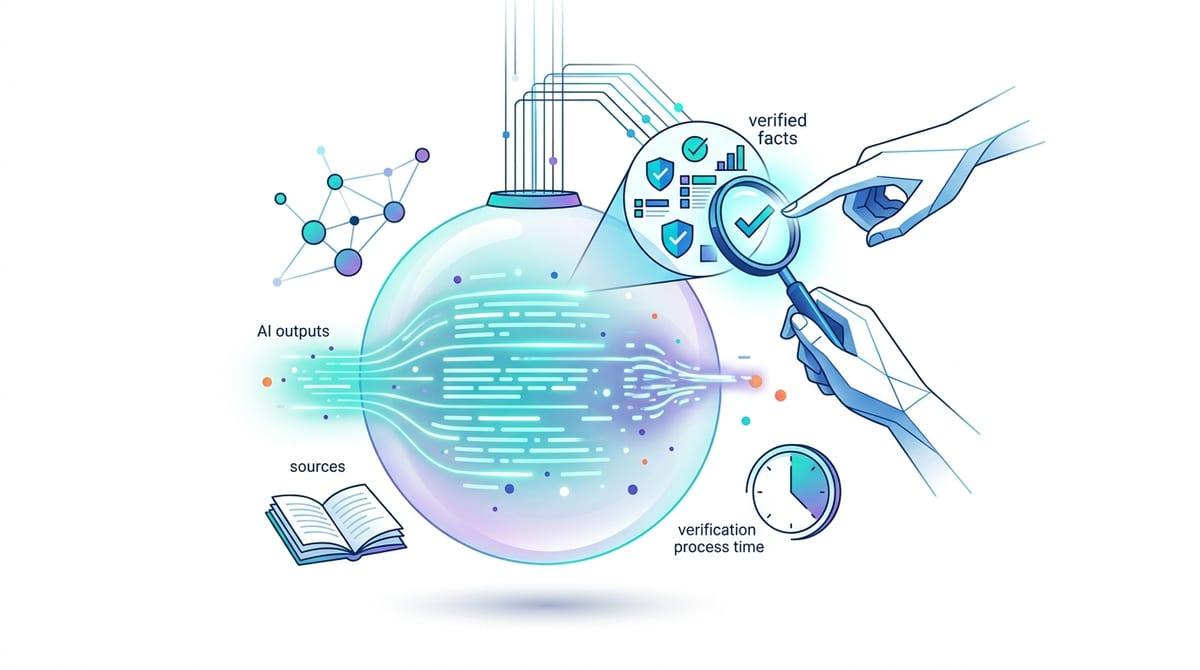

Guardrails are how you keep that damage small. Treat each task like a contract: required fields, allowed tools, banned outputs, and a fallback when inputs are missing. Then enforce it with validators (schema checks, citation requirements, PII filters) before anything hits a user.
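As a rough illustration, the three validator types named above can be combined into one check that runs before a response is released. Everything here is an assumption made for the example: the output shape (`answer`, `citations`), the function name, and especially the email pattern, which is far too simple to count as real PII detection.

```python
import re
from typing import List, Set

# Deliberately simple pattern; production PII filtering needs much more.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
REQUIRED_FIELDS = ("answer", "citations")


def validate_output(output: dict, allowed_sources: Set[str]) -> List[str]:
    """Return a list of violations; an empty list means the output may ship."""
    problems = []
    # Schema check: every required field must be present.
    for name in REQUIRED_FIELDS:
        if name not in output:
            problems.append(f"missing field: {name}")
    # Citation requirement: at least one citation, all from allowed sources.
    citations = output.get("citations", [])
    if not citations:
        problems.append("no citations")
    for c in citations:
        if c not in allowed_sources:
            problems.append(f"unknown source: {c}")
    # PII filter: block answers that appear to contain an email address.
    if EMAIL_RE.search(output.get("answer", "")):
        problems.append("possible email address in answer")
    return problems
```

Returning the full list of violations, instead of stopping at the first, makes it easier to see in logs which guardrail is doing the work for each task.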

Evals make it real. Keep a small, fixed set of representative examples per task and run them on every prompt, retrieval, or routing change. This takes ongoing work: you’ll argue about expected answers, label edge cases, and maintain test data as policies and products change. The payoff is knowing your blast radius before customers do.
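A per-task eval run can start very small. The sketch below assumes a hypothetical `pipeline(task, text)` callable and uses toy keyword checks in place of real expected answers; the point is only the shape, with fixed cases per task and a pass rate reported for each.

```python
from typing import Callable, Dict, List, Tuple

# Each case pairs an input with a check on the output (both hypothetical).
EvalCase = Tuple[str, Callable[[str], bool]]

EVAL_SETS: Dict[str, List[EvalCase]] = {
    "support": [
        ("How do I reset my password?", lambda out: "reset" in out.lower()),
    ],
    "invoices": [
        ("What is the total on invoice 123?", lambda out: "total" in out.lower()),
    ],
}


def run_evals(pipeline: Callable[[str, str], str]) -> Dict[str, float]:
    """Run every task's fixed cases and return the pass rate per task."""
    results = {}
    for task, cases in EVAL_SETS.items():
        passed = sum(1 for text, check in cases if check(pipeline(task, text)))
        results[task] = passed / len(cases)
    return results
```

Running this on every prompt, retrieval, or routing change shows when a change aimed at one task lowers the pass rate of another.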

System design choices that make new capabilities feel like “plugins”

Once you’ve seen a retrieval or routing change knock over an older task, you start designing so new work can attach without rewiring the whole app. In practice, that means separating “what the user wants” from “how the system executes”: a front-door intent step, a task router, and then a task module with its own prompt, tools, and validators. If invoices need an OCR tool and billing lookups, that module owns those calls; support replies don’t even see them.

The plugin feeling comes from stable contracts. Define a shared input envelope (user message, locale, customer tier, allowed data scopes), then let each task add its own required fields and tool permissions. Pair that with per-task logging and eval sets, so you can ship Task B behind a flag and watch failures without drowning in Task A traffic.
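The envelope-plus-module idea might look something like the following. All names here (`Envelope`, `TaskModule`, `register`, `route`, the `billing` scope) are hypothetical, and the handlers are stand-ins for a real prompt, tool set, and validators.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


@dataclass(frozen=True)
class Envelope:
    # Shared input envelope, using the fields listed in the text.
    user_message: str
    locale: str
    customer_tier: str
    allowed_scopes: FrozenSet[str]


@dataclass(frozen=True)
class TaskModule:
    name: str
    required_scopes: FrozenSet[str]   # data scopes this task must be granted
    handler: Callable[[Envelope], str]


REGISTRY: Dict[str, TaskModule] = {}


def register(module: TaskModule) -> None:
    """Attach a task module without touching the others."""
    REGISTRY[module.name] = module


def route(intent: str, env: Envelope) -> str:
    """Dispatch to the module for this intent, with explicit fallbacks."""
    module = REGISTRY.get(intent)
    if module is None:
        return "FALLBACK: unknown task"
    if not module.required_scopes <= env.allowed_scopes:
        return "FALLBACK: missing data scope"
    return module.handler(env)


# Placeholder modules; a real one would own its prompt, tools, and validators.
register(TaskModule("support", frozenset(), lambda env: f"support reply ({env.locale})"))
register(TaskModule("invoices", frozenset({"billing"}), lambda env: f"invoice summary ({env.locale})"))
```

Adding a new task is then a new `register` call plus its own eval set, and the scope check keeps one module from quietly reading data that was only approved for another.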

The trade-off is more interfaces to maintain, more permission boundaries to get right, and more places where latency can creep in. The next pressure test is vendor claims: what’s actually modular, and what’s bundled together.

A practical checklist for judging vendors—and planning your next task

That’s where vendor demos can mislead: a “modular” story might still mean one big prompt, one shared retrieval index, and one set of logs you can’t split by task. Ask to see the boundaries in practice. Can you define per-task tool permissions, schemas, and fallbacks without forking the whole app? Can you run evals per module, and trace any output back to exact sources, prompts, and tool calls?

Then plan the next task like a small launch, not a tweak: what new runtime data is required, where it comes from, who approves access, and what happens when it’s missing. Budget for boring costs—redaction, latency, retries, and test cases—because that’s where “general-purpose” usually breaks.