You’re told to “get more done” — but you can’t see where the day actually goes

You get the message in a staff meeting: “We need more throughput with the same team.” Then you look at your dashboards and they tell you almost nothing about the work people actually do all day.

A case starts in email, moves to a ticket, gets “quickly” checked in a spreadsheet, and waits on an approval in a separate system. By the time it closes, the lagging KPI looks fine, but it hides the loops: re-entered data, missing info, handoffs that stall, and exceptions handled in side chats.

Trying to fix this by asking for more status updates costs time and can feel like policing. The real problem is simple: you can’t improve what you can’t reliably see.

When is process intelligence the right tool (and when is it just another dashboard)?

When you can’t reliably see the work, the usual response is to add a new report. It rarely helps, because it still depends on people labeling work the same way and updating fields on time.

Process intelligence is the right tool when the work leaves a trail across systems and handoffs, and you need to answer questions like: Where do cases wait? Where do they bounce back? Which queues create the most rework? If a customer refund touches email, a CRM, and billing, you can measure the actual path, not the “happy path” in a slide.
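As a rough illustration of "where do cases wait?", here is a minimal sketch that assumes you can export a timestamped event log with one row per case step. The column layout, step names, case IDs, and timestamps are all made up for the example; a real export from email, a CRM, or billing will look different and will need cleaning first.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical event log: (case_id, step, system, timestamp). All values are invented.
EVENTS = [
    ("R-1", "request_received", "email", "2024-03-04 09:00"),
    ("R-1", "ticket_created", "crm", "2024-03-04 09:30"),
    ("R-1", "approval", "billing", "2024-03-06 09:30"),
    ("R-2", "request_received", "email", "2024-03-04 10:00"),
    ("R-2", "ticket_created", "crm", "2024-03-04 11:00"),
    ("R-2", "approval", "billing", "2024-03-05 11:00"),
]


def average_wait_hours(events):
    """Average hours between consecutive steps, keyed by (from_step, to_step)."""
    # Group events by case so each case's path can be ordered in time.
    by_case = defaultdict(list)
    for case_id, step, _system, ts in events:
        by_case[case_id].append((datetime.strptime(ts, "%Y-%m-%d %H:%M"), step))

    # Collect the elapsed time for every handoff a case actually went through.
    waits = defaultdict(list)
    for steps in by_case.values():
        steps.sort()
        for (t0, s0), (t1, s1) in zip(steps, steps[1:]):
            waits[(s0, s1)].append((t1 - t0).total_seconds() / 3600)

    return {handoff: sum(hours) / len(hours) for handoff, hours in waits.items()}


WAITS = average_wait_hours(EVENTS)

# Print the slowest handoffs first.
for handoff, hours in sorted(WAITS.items(), key=lambda kv: -kv[1]):
    print(handoff, round(hours, 1))
```

In this toy data the handoff from ticket creation to approval averages 36 hours, while intake to ticket creation averages under an hour. That is the kind of contrast a lagging KPI on total cycle time tends to hide.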

It turns into “just another dashboard” when your main issue is policy, staffing, or training—and the data won’t capture the real cause anyway (like unclear ownership or approvals done in chat). In those cases, better decisions come from tightening the decision rules first, then measuring.

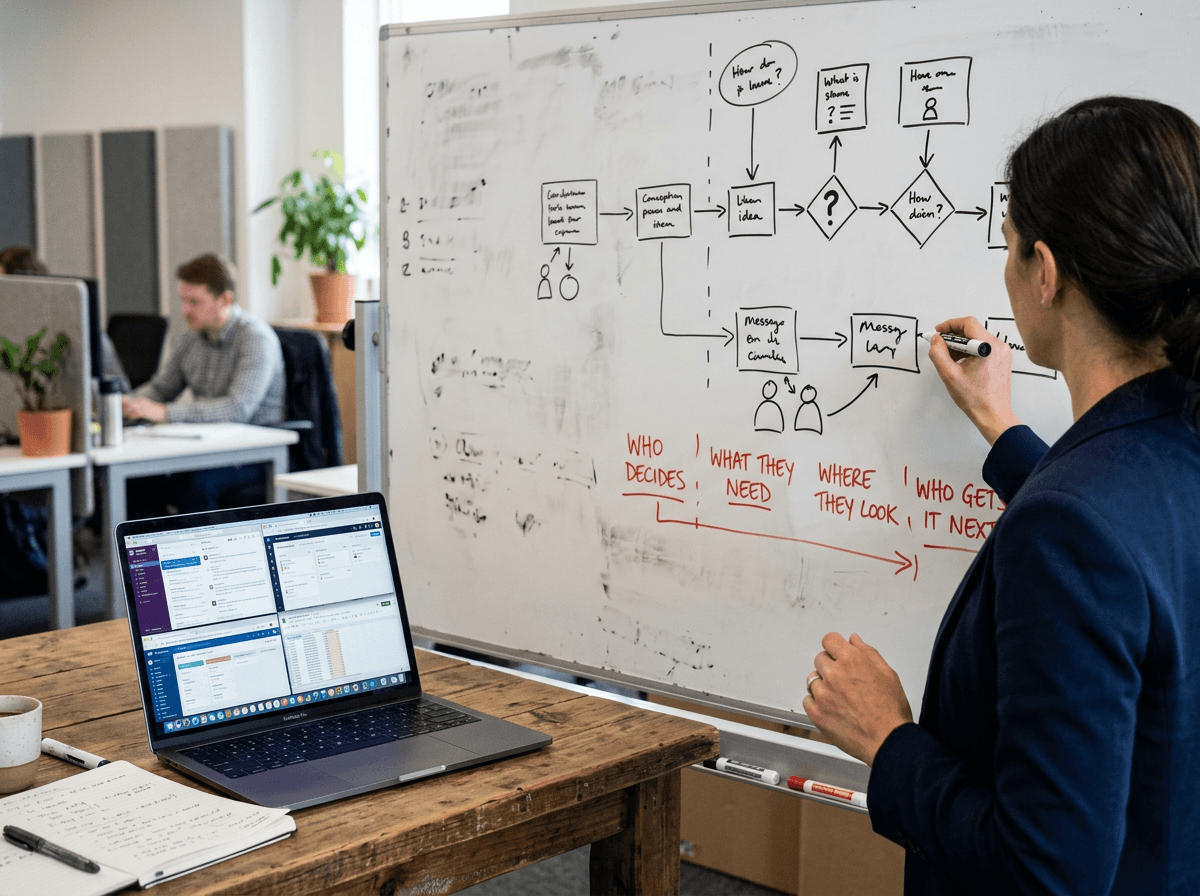

The first “map” you need isn’t a BPMN diagram; it’s a list of decisions, handoffs, and systems

Tightening decision rules starts with writing down where decisions happen, not drawing a perfect flowchart. In most ops teams, the real path isn’t “step 1 to step 7.” It’s “Who decides, what do they need, where do they look, and who gets it next?”

Build a simple map you can finish in an hour: the top 10 decisions (approve, reject, request info), the handoffs between roles or teams, and the systems touched at each point (email, ticketing, CRM, ERP, spreadsheet). Add the common “escape hatches” too: side chats, calls, and manual notes. If a refund gets stuck, you want to know whether it’s waiting on data, waiting on a person, or waiting on a system queue.

The hard part is scope. If you try to map everything, you’ll stall. If you map too little, you won’t know what to measure next.

Where productivity leaks hide in plain sight: waiting, rework, and invisible work

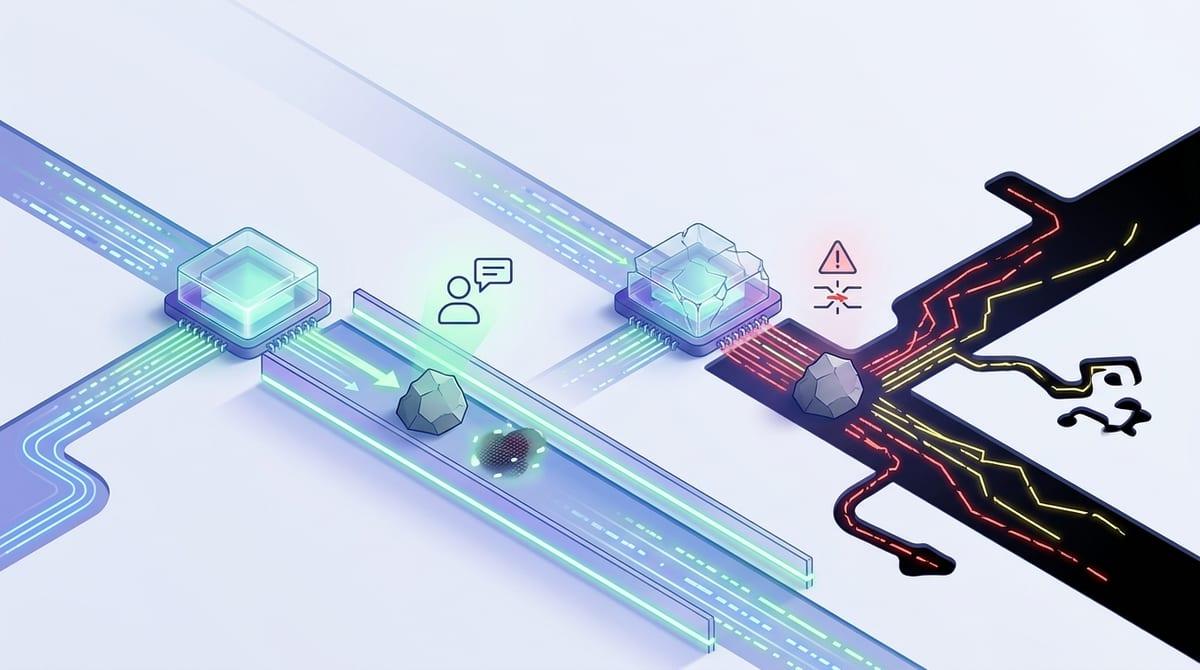

Once you’ve scoped the map, the leaks usually show up in places no one reports on: time spent waiting, work that comes back, and work that never becomes a “case” at all. A ticket can sit for two days because the next approver only checks a queue twice a week. That delay doesn’t look like effort, but it stretches cycle time and forces people to context-switch when it finally moves.

Rework is the second leak. If a form gets rejected for missing info, the same data gets retyped, reattached, and re-explained across email, CRM notes, and a spreadsheet “tracker.” Process intelligence helps when you can tag these loops to specific decision points, teams, or systems—so you can fix the cause, not coach people to “be careful.”
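One way to tag these loops, sketched under the assumption that you already have each case's steps in time order: count every transition that sends a case back to a step it reached earlier. The step names and case IDs below are hypothetical, and "earlier" here simply means "first seen earlier in that case's path", which is a simplification.

```python
from collections import Counter

# Hypothetical per-case step sequences, already ordered by time.
PATHS = {
    "R-1": ["form_submitted", "review", "form_submitted", "review", "approved"],
    "R-2": ["form_submitted", "review", "approved"],
    "R-3": [
        "form_submitted", "review",
        "form_submitted", "review",
        "form_submitted", "review",
        "approved",
    ],
}


def count_bounce_backs(paths):
    """Count transitions that return a case to a step it first reached earlier."""
    loops = Counter()
    for steps in paths.values():
        # Record the position at which each step first appears in this case.
        first_seen = {}
        for position, step in enumerate(steps):
            first_seen.setdefault(step, position)

        # A transition is a bounce-back if it lands on an earlier-seen step.
        for prev, cur in zip(steps, steps[1:]):
            if first_seen[cur] < first_seen[prev]:
                loops[(prev, cur)] += 1
    return loops


LOOPS = count_bounce_backs(PATHS)
print(LOOPS.most_common())
```

Keying the count by the pair of steps, rather than by person, keeps the focus on the decision point that sends work back. Here every bounce comes from review returning the form to the submitter.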

Invisible work is harder: escalations in chat, shadow logs, “quick calls,” and manual reconciliations. You won’t capture all of it automatically, so you’ll need a light way to sample it without turning it into extra bureaucracy. Then pick where to measure first.

“Will this feel like surveillance?” — designing for trust before you measure anything

When you “pick where to measure first,” people usually ask a sharper question: what, exactly, are you measuring about them? If the rollout sounds like “we’re going to see who’s slow,” you’ll get quiet resistance—extra notes in chat, work done off-system, and fewer honest exceptions logged. The data gets worse right when you need it most.

Start by designing for trust before you connect any logs. Be explicit about the unit of analysis: paths, queues, and handoffs—not individual performance. Put it in writing. Share a short list of questions you’re trying to answer (where cases wait, where they bounce, which systems cause re-entry), and a short list of what you will not do (rank people, track keystrokes, use it for discipline). Then involve a few frontline reps to sanity-check the story against real work.

This still costs time: legal review, works council or HR alignment, and careful data access controls can slow the start by weeks.

Picking 2–3 use cases that actually free up capacity without adding headcount

People will support the work when the target is specific and the “win” shows up in their week, not in a steering deck. Start by listing the moments that force repetition or waiting: missing-info loops, approvals that bounce, duplicate entry between two systems, or a queue that sits until someone remembers to check it.

Then filter hard. A good use case has (1) enough volume to matter, (2) a measurable path across systems, and (3) a lever you can actually pull—like changing required fields, routing rules, or who can approve what. “Reduce cycle time” is too broad; “cut refund rework caused by missing order IDs by 30%” is concrete.
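The three-part filter can be written down as a simple checklist. The sketch below is only an illustration: the candidate names, the monthly volumes, and the 200-cases-per-month threshold are assumptions, and you would set your own cutoff for "enough volume to matter".

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    monthly_cases: int   # (1) volume
    path_in_logs: bool   # (2) can the path be measured across systems?
    lever: str           # (3) the change you could make; empty if none


# Assumed cutoff for "enough volume to matter"; adjust to your own process.
MIN_MONTHLY_CASES = 200

# Hypothetical candidates with invented numbers.
CANDIDATES = [
    UseCase("Missing order IDs on refunds", 900, True, "make order ID a required field"),
    UseCase("Escalations handled in chat", 400, False, ""),
    UseCase("Duplicate entry between CRM and billing", 50, True, "sync the field"),
]


def passes_filter(use_case):
    """A use case qualifies only if it meets all three criteria."""
    return (
        use_case.monthly_cases >= MIN_MONTHLY_CASES
        and use_case.path_in_logs
        and bool(use_case.lever)
    )


SHORTLIST = [uc.name for uc in CANDIDATES if passes_filter(uc)]
print(SHORTLIST)
```

Requiring all three criteria at once is deliberate: a high-volume pain point with no measurable path or no lever is still worth noting, but it is not a good first use case.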

Expect limits. Some pain points are real but won’t show in logs (calls, side chats), and some fixes require IT capacity you don’t have this quarter. Pick 2–3 you can change within 30–60 days, and write down what decision you’ll make if the data confirms the pattern.

A low-risk pilot that produces changes—not just insights

Writing down what decision you’ll make is what keeps a pilot from becoming a “learning exercise” that never changes anything. Pick one use case, one process slice, and one agreed outcome, like “reduce missing-info returns in refunds” or “cut approval waiting time in onboarding.” Then set a short window—two to four weeks—so you’re forced to act on what you find.

Keep the pilot lightweight on purpose. Use a small group of real cases, connect only the systems you need, and run a weekly review with the people who do the work. If the data shows a loop, don’t stop at the chart—change one rule, one field, or one routing step and watch whether the path actually changes the next week.

The hard part is access and change control. Even “small” fixes can require IT time, security reviews, and approvals from other teams, so get a named owner for each change before you start. Once you can ship one improvement end-to-end, you’re ready to lock in new ways of working and keep momentum.

Turning findings into new ways of working (and keeping momentum)

Once you can ship one improvement end-to-end, the risk shifts: people slip back to the old path when the next fire hits. Lock in the change where work actually happens—update required fields, routing rules, templates, and “definition of done,” not a slide. If the fix depends on someone remembering, it won’t hold.

Keep momentum with a tight cadence: a 15-minute weekly review of one metric and one example case, with a named owner who can approve the next tweak. Expect pushback from change control and downstream teams; even small adjustments can get stuck in someone else’s queue. Make the start date for the next use case part of the commitment, not something left for “when we have time.”